Why EHRs are so buggy & medical knowledge changes so slowly

Why is healthcare IT so often bad? And why does it take so many years for medical research to be implemented and reach patients? And most importantly: how are these two questions connected?

Why are electronic health records (EHRs) so bad?1 And why does it take so long for healthcare to adopt new knowledge?

Here’s a true story from my time at an emergency department (originally published here).

Pagers, PEs & Problems

One Saturday night at the emergency department I meet a 79-year-old woman struggling to breathe. After ruling out other dangerous causes, I’m left with one possible and scary diagnosis - a pulmonary embolism (PE).

I assess the risk for PE using something called a Wells’ score, which tells me that the patient is at low risk for having a PE. For low-risk patients, a normal blood test (called D-dimer) can virtually rule out a PE.

So I order one. But unfortunately her D-dimer is 0.37 mg/l (higher than the 0.25 mg/l cutoff). I therefore order a CTPA scan (a type of X-ray) in the electronic health record. I enter my phone number in the computer’s referral system in the box labelled “phone number”. Two hours later a nurse asks me to go answer a call on another phone on the other side of the emergency room. The radiologist has been trying to reach me for an hour to discuss the patient's kidney function. I pick up the phone and answer:

- Wait, why didn’t you call my personal number? I wrote my number in the box labeled phone number.

- Oh yeah, but we can’t see anything anyone writes in that box. Write your number anywhere but there in the future.

Many patients are waiting for an x-ray. After five hours I get the answer: no PE. I send the patient home.

The next patient is a man with a bleeding stomach ulcer. I page an endoscopy consultant and an answering machine says »Please hold, paging ongoing«. The voice repeats itself and I wait. Nothing happens. After 5 minutes I hang up and try calling again. No answer. I try again. A colleague asks:

- What are you doing?

- I'm trying to get hold of the endoscopy consultant and the system just keeps asking me to wait.

- Oh, but it always says that. You need to hang up after the voice has said that once.

It’s 23.30. I’m lying in bed and I just can’t stop thinking about why the healthcare IT systems don’t work. I have Franz Kafka's The Trial on my bedside table.

If the EHR instructs me to "write your number here" - then I shouldn't write my number there. If the pager system asks me to wait - then I shouldn’t wait. How much time do healthcare systems waste on bizarre IT errors that benefit no one?

This made me start thinking more about what we spend time on in healthcare. And I painfully realized that I, too, had wasted a lot of time on unnecessary things.

The woman didn’t need the x-ray. The cutoff for a normal D-dimer (the test that made me order the x-ray) actually increases with age. At that point in time, several larger studies had already shown the validity of age adjusting D-dimer, i.e. using a higher limit for older patients. That increases the specificity for pulmonary embolism without losing sensitivity.
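As a rough illustration of the arithmetic, here is a minimal sketch. It assumes the commonly cited rule from the age-adjustment studies (for patients over 50, the cutoff is age × 10 µg/l on assays whose conventional cutoff is 500 µg/l) and scales it proportionally to the 0.25 mg/l cutoff in the story. That scaling, the threshold age of 50 and the function names are assumptions made for this example, not something stated above.

```python
def age_adjusted_cutoff(age_years: int,
                        base_cutoff_mg_l: float = 0.25,
                        threshold_age: int = 50) -> float:
    """Return an illustrative age-adjusted D-dimer cutoff in mg/l.

    Assumption: above the threshold age, the cutoff grows linearly with age,
    so that it equals the base cutoff at exactly the threshold age.
    """
    if age_years <= threshold_age:
        return base_cutoff_mg_l
    return base_cutoff_mg_l * age_years / threshold_age


def exceeds_cutoff(d_dimer_mg_l: float, cutoff_mg_l: float) -> bool:
    """True if the D-dimer is above the cutoff, i.e. PE is not ruled out."""
    return d_dimer_mg_l > cutoff_mg_l


# Values from the story: a 79-year-old with a D-dimer of 0.37 mg/l.
patient_age = 79
patient_d_dimer = 0.37

fixed_cutoff = 0.25
adjusted_cutoff = age_adjusted_cutoff(patient_age)

print(f"Fixed cutoff:    {fixed_cutoff:.3f} mg/l, above cutoff: "
      f"{exceeds_cutoff(patient_d_dimer, fixed_cutoff)}")
print(f"Adjusted cutoff: {adjusted_cutoff:.3f} mg/l, above cutoff: "
      f"{exceeds_cutoff(patient_d_dimer, adjusted_cutoff)}")
```

Under these assumptions, the same result of 0.37 mg/l is above the fixed cutoff but below the age-adjusted one, which is why the scan could have been avoided.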

If I had acted on the best available knowledge, the patient would have avoided an unnecessary examination. Also, the unnecessary x-ray didn’t only waste five hours for the patient, but 30 minutes for the nurse, 20 minutes for the radiology nurse, 30 minutes for the radiologists, 45 minutes for the porter, and 30 minutes for myself. Without helping anyone in any way.

Confusing IT systems waste time in a bizarre manner. But X-raying patients based on a test result that is actually entirely normal is also Kafkaesque. I shifted from feeling righteously frustrated with the IT systems to realizing that I’ve also contributed to ineffective care, without even knowing it. Don’t I, like those who create IT systems, have a responsibility to ensure that time is spent on the right things?

But age-adjusted D-dimer wasn’t included in our 2015 guidelines. What should you do if new research is published, but guidelines or Cochrane reviews haven't been updated? Blindly follow the old guidelines, or after assessing the literature, challenge the norm and independently follow newer findings?

When this essay was published in 2016, I was grappling with ineffective, poorly designed IT systems and colleagues reluctant to update their knowledge base. Several large studies had proven the utility and safety of age-adjusted D-dimer.2 However, my colleagues were more afraid of deviating from guidelines than doing what extensive research had demonstrated was best. I was experiencing, first-hand, the oft-cited 17 year gap between research findings and their application in clinical practice.3

I was frustrated - both of these problems (bugs in IT systems and a long time until research reaches clinical use) seemed like examples of larger anti-progress problems that were worth solving in order to accelerate other progress.

In hindsight, I wish I had known what I know today. Unfortunately, these bugs are to be expected in electronic health records (EHRs) and other big healthcare IT systems. Delays in applying the most recent knowledge are to be expected in healthcare. And moreover - this will most likely always be the case.

This may sound odd, but bear with me. If you do, you’ll hopefully become less frustrated and also find out how this is useful for understanding other complex systems.

II. EHRs will always be complex, slow to change, and buggy

Electronic Health Records (EHRs) are computer programs used by clinical staff to manage patient health data, and are generally used both to document new data (e.g. current medical notes) and show old data (e.g. old notes or lab tests). Whenever your doctor looks through old notes, or checks your blood tests, or sends a referral - they’re using an EHR.

EHRs have lots of bugs. And bugs in a central IT system can have severe consequences. An example from Death By 1,000 Clicks: Where Electronic Health Records Went Wrong:

Ronisky … arrived by ambulance at Providence Saint John’s Health Center in Santa Monica on the afternoon of March 2, 2015. For two days, the young lawyer had been suffering from severe headaches while a disorienting fever left him struggling to tell the 911 operator his address.

Suspecting meningitis, a doctor at the hospital performed a spinal tap, and the next day an infectious disease specialist typed in an order for a critical lab test — a check of the spinal fluid for viruses, including herpes simplex — into the hospital’s EHR.

The multimillion-dollar system, manufactured by Epic Systems Corp. and considered by some to be the Cadillac of medical software, had been installed at the hospital about four months earlier. Although the order appeared on Epic’s screen, it was not sent to the lab. It turned out, Epic’s software didn’t fully “interface” with the lab’s software, according to a lawsuit Ronisky filed in February 2017 in Los Angeles County Superior Court. His results and diagnosis were delayed — by days, he claimed — during which time he suffered irreversible brain damage from herpes encephalitis.

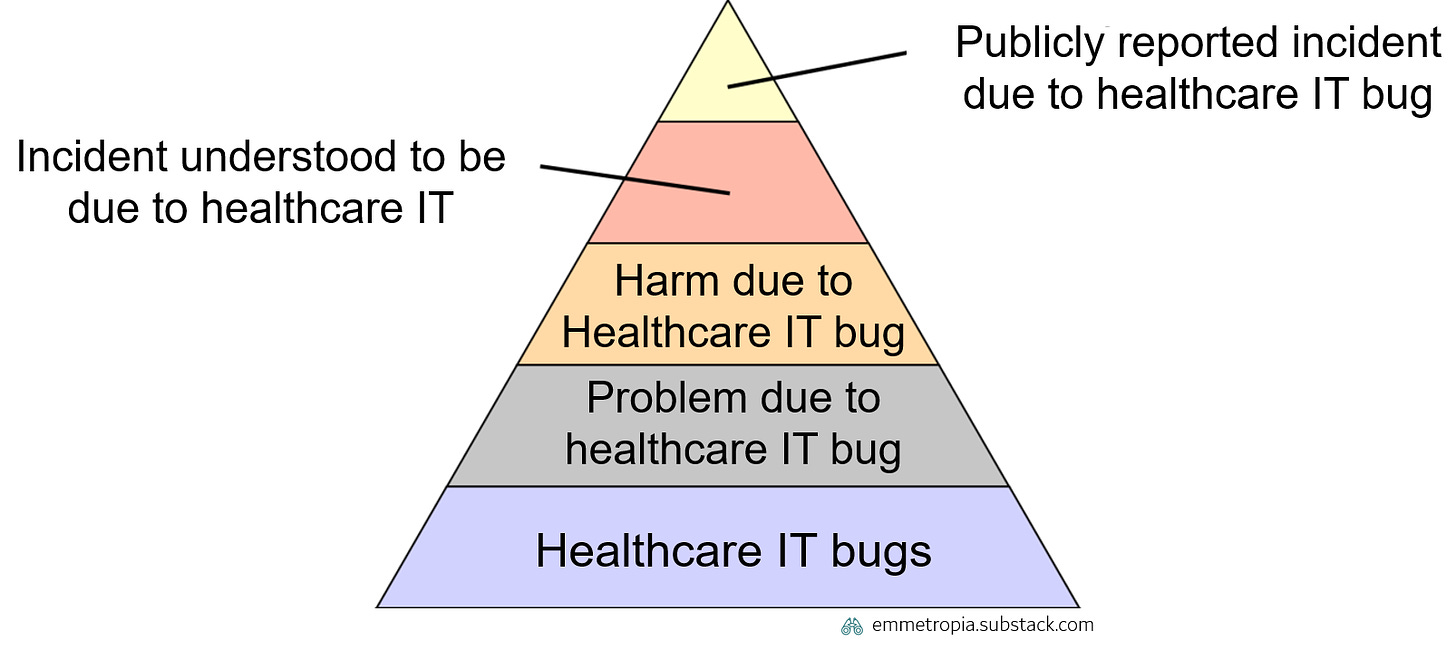

IT bugs are common. For each publicly reported incident due to a healthcare IT bug (top segment of the pyramid), there are many more bugs which are experienced by clinicians on a daily basis (bottom segment of the pyramid).

When a large or important bug is detected in an EHR it is often fixed rapidly. A contract between an EHR vendor and a provider will often state that serious bugs have to be addressed within a certain time span. However, in general, you don’t have the same time constraints for minor bugs.

Wait, what? Why not simply demand a perfect system? Well, providers could demand that, but no EHR supplier would ever dare to sign that contract. This dynamic is unavoidable if the following principles hold true.

A growing scope and number of stakeholders with different needs

Different users with different needs: Many EHRs are used by a growing number of different types of users (e.g. doctors, nurses, physiotherapists) across different specialties (e.g. emergency medicine, surgery, oncology) and different settings (e.g. inpatient and outpatient). The need for coordination across a health system increases as each unit becomes more specialized and an individual patient visits a greater number of clinics. Different clinicians might see the same patient note in the EHR, but have very different needs, and focus on different types of information. This means that there will be an increasing number of different demands on functionality in the EHR from different users.

Scope growing over time: The scope of EHRs has grown over time. There was a time when lab systems, radiology systems, referral systems and ICU systems were separate systems from the EHR. Today many EHRs are expected to contain such capabilities. As EHRs are purchased by tenders, hospital systems (which prefer to have such systems integrated) can specify this in the tender, which forces EHRs to expand their scope.

Why is this important? This growth in scope and needs means that all possible permutations of features can’t be fully tested in advance. Not that it’s tricky, but that it isn’t possible.

As EHRs grow in size and functions, you get a (literally) exponential combination of things that can happen, which makes it impossible to bug-test every combination in advance. For example, let’s imagine an extremely simplified EHR that only has 15 different variables with 4 states for each, e.g. whether a patient is in a ward, an inpatient clinic, an outpatient clinic or at home, whether someone else is editing their chart, whether they have been tagged for surgery or for a certain clinical pathway, etc. Just that generates over 1 billion different permutations (4^15 = 1,073,741,824). In reality there are many more variables. How many of these billion permutations are providers ready to pay EHR suppliers to test in advance? Well, a limited number, due to:

Cost control: Healthcare providers could spend an infinite amount of resources on developing a comprehensive EHR with even more functions and testing a greater number of these. But resources are limited, and healthcare providers want to pay as little as possible for this. EHRs are therefore often procured via big tenders where providers list functions that the system must have, and where the total price is an important factor. Defining these functions is very tricky.4 This incentivizes suppliers to provide just the “right” amount of functionality and bug-fixing service to avoid critical and visible flaws/bugs. However, promising too much bug-fixing risks costing the vendor a lot, which in turn increases the price in a tender and the risk of losing the contract. Moreover, there is no incentive to create a perfect system, as that is difficult for buyers to detect when comparing EHRs in a tender, and is therefore not reflected in the price. At the same time EHRs are…

Central for clinical processes, but painful to change: EHRs provide patient information which is needed for providing healthcare - that’s why they’re smack in the middle of nearly all clinical processes where patient information is important. You don’t want to use an old inefficient EHR, when you could switch to a newer version that is more up to date. However, as it is so central, you don’t want to change too often as it’s painful (you have to educate staff, reintegrate lots of systems, change habits etc).5 Suppliers know that providers have a very high switching cost, and know that minor bugs won’t cause the provider to change to a new system.
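To make the size of the simplified EHR above (15 variables with 4 states each) concrete, here is a small back-of-the-envelope sketch. The one-second-per-test figure is a purely hypothetical assumption chosen for illustration.

```python
VARIABLES = 15
STATES_PER_VARIABLE = 4
SECONDS_PER_TEST = 1  # hypothetical: one automated test per second

# Every variable can be in any of its states independently of the others.
combinations = STATES_PER_VARIABLE ** VARIABLES

seconds_per_year = 60 * 60 * 24 * 365
years_to_test_all = combinations * SECONDS_PER_TEST / seconds_per_year

print(f"{combinations:,} combinations")  # 1,073,741,824 combinations
print(f"about {years_to_test_all:.0f} years to test each one once")

# Each extra variable multiplies the total by four.
for n in (15, 16, 20):
    print(f"{n} variables: {STATES_PER_VARIABLE ** n:,} combinations")
```

Even at that optimistic pace, running every combination once would take decades, and a single additional variable quadruples the job.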

So, to sum it up:

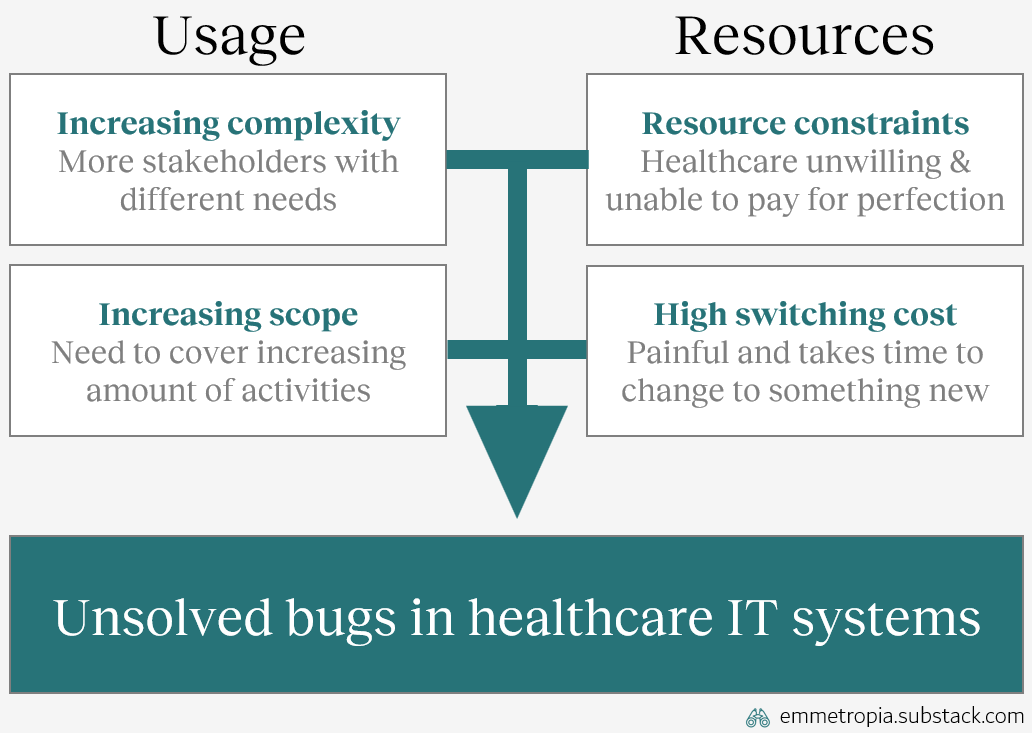

Small unsolved errors are a consequence of the demands placed on EHRs. Those demands are shaped by an increasing complexity (where all permutations of real-life usage can’t be tested), an increasing scope, resource constraints (providers aren’t ready to pay to solve the large number of minor errors in advance) and high switching costs to replacing EHRs.

Or summarized in a picture:

So why is this interesting? These factors also explain why it takes so long for medical research to reach practice.

III. Medical knowledge will always be complex, slow to change, and buggy

Medical knowledge is a thing of beauty6. Extremely simplified:

Researchers do experiments and publish their results in medical journals

Expert clinicians look at what’s published and summarize that knowledge into new guidelines

Guidelines are used by clinicians to help them in their decision-making

And over time - healthcare makes incredible, important and inspiring progress

The tricky thing is that science is seldom perfect, and we often draw conclusions that aren’t true. Knowledge is often spread rapidly when a new study finds a big or important gap in knowledge7. Clinicians want to give the best possible care to their patients, and especially don’t want to do something that hurts them.

However, for less important topics (i.e. not life-or-death, or severely disabling), change can take a longer time. Why? Well, it’s complicated. But simplified:

A growing scope and number of stakeholders with different needs

Different users with different needs: Over time, our knowledge base has expanded. Different clinicians (e.g. doctors, nurses, physiotherapists) across different specialties (e.g. emergency medicine, surgery, oncology) may all interact with a patient. When attempting to understand whether a treatment or procedure is better or worse than an alternative, there are now many more perspectives on, and alternatives for, how to treat a patient. Different clinicians might see the same medical studies on a certain topic, but focus on different parts or reach different conclusions due to different needs and perspectives.8

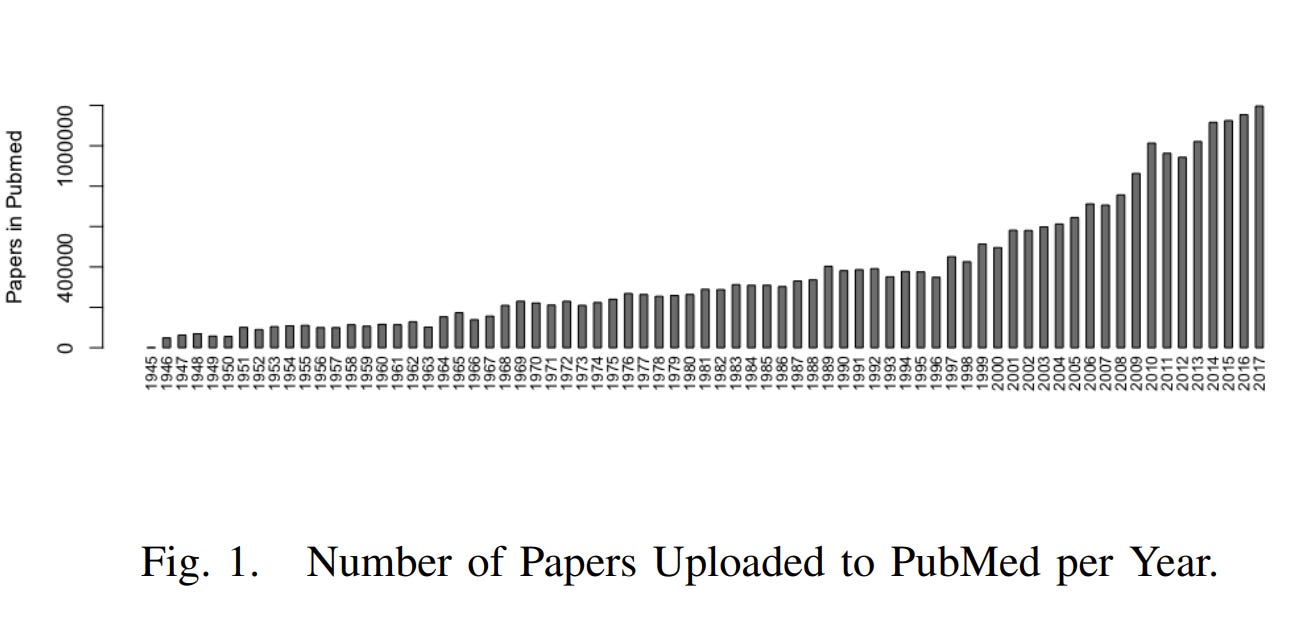

Scope growing over time: The scope of medical knowledge, in terms of the topics that we explore scientifically, has grown over time. The number of publications in the medical database PubMed has increased significantly. The graph below shows the number of new papers uploaded to PubMed per year.

Why is this important? This growth in scope and needs means that all possible permutations of this applied knowledge can’t be fully tested in advance.

As healthcare systems start having new things (e.g. new treatments, interventions, professional roles), we get a (literally) exponential combination of things that can happen, which makes it impossible to study every combination in advance.

For example, let’s imagine an extremely simplified healthcare system, where all patients only have 15 different variables (age, gender, if they have any other conditions, current medications, allergies, etc) with just 4 states for each. Just that generates over 1 billion different permutations. In reality there are many more variables. In other words, a billion different permutations where a treatment or process can have different effects. How many of these billion permutations are healthcare providers ready to pay researchers to test in advance? Well, a limited number, due to:

Cost control: Healthcare providers could spend an infinite amount of resources on studying every single condition and treatment in every single subgroup. However, resources are scarce, and in general healthcare providers want to pay as little as possible for this. Researchers often get more financing based on if they publish in high impact (i.e. fancy) journals. The best way to get published in a fancy journal is to study some really cool or important topic. This incentivizes researchers to focus on solving critical and important gaps in knowledge, and spend less energy on more mundane and minor topics. Moreover, medical knowledge is...

Central for clinical processes, but painful to change: Medical knowledge is central for providing healthcare - that’s why it’s smack in the middle of all clinical processes. Clinicians don’t want to use old, inaccurate knowledge when they could use newer knowledge that is more up to date and more accurate. However, as knowledge is so central, providers can’t change it too often, since that means updating lots of guidelines and changing habits. Consequently, there are certain barriers to adopting or assessing new knowledge. These switching costs are high. Moreover, if there is a dogma or well-established “truth” in place, the barrier for change is even higher.9
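A quick sketch of why studying every patient subgroup in the simplified healthcare system above (15 variables with 4 states each) is out of reach. The 100 patients per subgroup is a hypothetical minimum chosen for illustration, and the world population is a rough round figure.

```python
subgroups = 4 ** 15  # 15 patient variables with 4 states each

PATIENTS_PER_SUBGROUP = 100       # hypothetical minimum for a meaningful study
WORLD_POPULATION = 8_000_000_000  # rough round figure

participants_needed = subgroups * PATIENTS_PER_SUBGROUP
ratio = participants_needed / WORLD_POPULATION

print(f"{subgroups:,} subgroups")
print(f"{participants_needed:,} participants needed")
print(f"roughly {ratio:.0f} times the world population")
```

Under these assumptions, a single treatment studied in every subgroup would need more participants than there are people, so someone always has to choose which subgroups are worth studying.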

So, to sum it up:

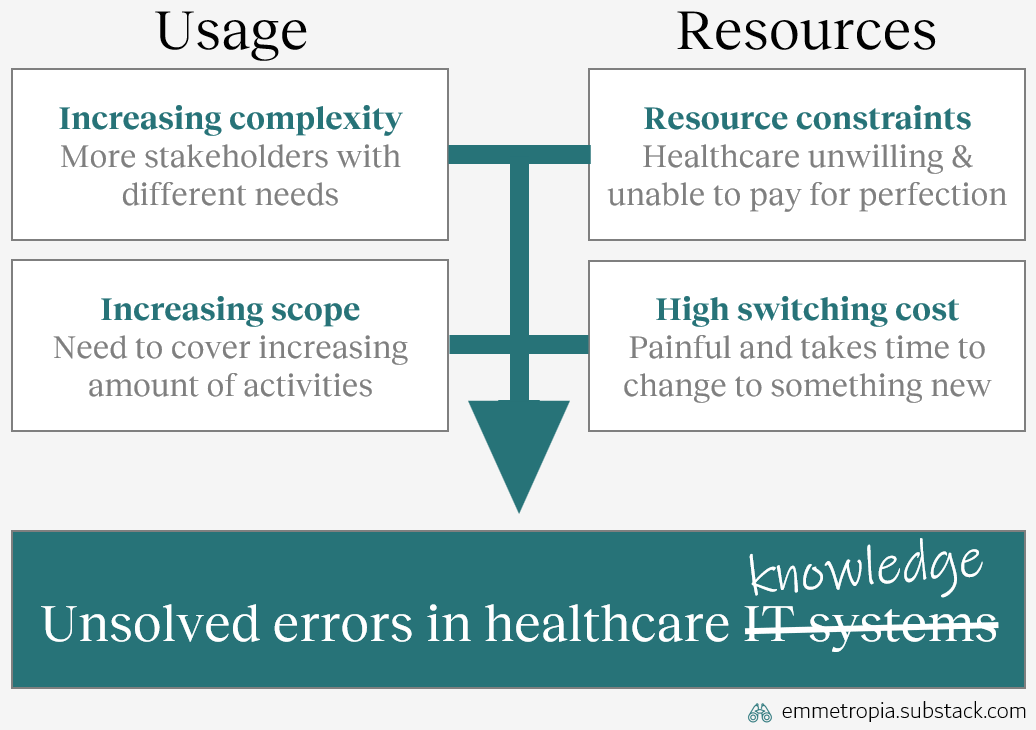

Small unsolved errors are a consequence of the demands placed on medical knowledge. Those demands are shaped by an increasing complexity (where all permutations of real-life usage can’t be tested), an increasing scope, resource constraints (providers aren’t ready to pay to solve the large number of minor errors in advance) and the high switching costs of replacing medical knowledge.10

Or summarized in a picture, which you hopefully recognize:

IV. The “So What”

Right now, the reasonable question to ask is: so what? Why does it matter that there are similar underlying reasons for why medical knowledge and EHRs can have minor errors that are detected but not addressed?

Well, I believe this mental model can be useful, and that…

Understanding the similarities can decrease frustration when

Facing EHR bugs: I think it’s easier to understand that an annoying bug might actually be minor, considering how many different EHR users and needs there are. There are probably bigger bugs that one simply hasn't encountered and which are being prioritised. It also helps to know that minor bugs are prioritized much like non-updated parts of clinical guidelines: it would of course be good to fix them, but one needs to prioritize, and there are other more important things to fix first.

Facing non-adherence to latest research: I think it’s easier to realize how extremely difficult it is for clinicians to incorporate new knowledge and adhere to best practice. It also helps to know that healthcare is a complex system with research on so many things, and with many (at times opposing) views on how to weigh different perspectives against each other.11

Also, that the problems can be alleviated by addressing underlying causes, such as…

Managing increased scope by de-aggregating, i.e. for example…

Tendering modular EHRs: There can be great value in not designing tenders to be as broad as possible. The broader the EHR is, the larger the scope and the greater the complexity in the system will be. One can instead opt for a more modular approach, and require the different modules to communicate in predefined ways. This can decrease the risk of unsolved bugs within each module. The complexity is moved out from each module and instead lies between the modules. This tradeoff increases salient coordination costs (in planning and executing the tender), but decreases long-term switching costs.

Using local evaluations to assess the results of applied knowledge: It is impossible to test all combinations and permutations of medical knowledge in advance. In medicine, randomized controlled trials are often deemed to generate the highest type of evidence. However, they are limited by their strict inclusion and exclusion criteria, which result in patient populations that can greatly differ from other patient populations at any given clinic. Humanity can’t afford to run RCTs on every combination of diseases and treatments. However, local evaluations (such as local follow-up of outcomes or quality registries) can overcome this challenge. That kind of follow-up is highly relevant for the specific clinic performing them (in contrast to RCTs), and can show how well it works to apply general knowledge to that specific patient population – and thus drive meaningful improvements.
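To illustrate the modular point above with the same toy numbers used earlier (15 variables, 4 states each), here is a minimal sketch. The split into three modules of five variables, and the assumption that modules only affect each other through two exposed variables each, are hypothetical simplifications.

```python
STATES_PER_VARIABLE = 4

# Monolith: all 15 variables can interact with each other.
monolith_states = STATES_PER_VARIABLE ** 15  # 1,073,741,824

# Modular: three hypothetical modules with 5 internal variables each,
# which only interact through a predefined interface.
module_sizes = [5, 5, 5]
within_module_states = sum(STATES_PER_VARIABLE ** size for size in module_sizes)

# Hypothetical interface: each module exposes 2 variables to the others.
exposed_per_module = 2
interface_states = STATES_PER_VARIABLE ** (exposed_per_module * len(module_sizes))

modular_states = within_module_states + interface_states

print(f"monolith:         {monolith_states:,} states")
print(f"within modules:   {within_module_states:,} states")  # 3,072
print(f"between modules:  {interface_states:,} states")      # 4,096
print(f"modular in total: {modular_states:,} states")
```

In this toy model the complexity doesn't vanish. More of it sits in the interface between modules than inside them, but the total that needs testing shrinks by several orders of magnitude, provided the modules really do interact only through the interface.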

Managing high switching costs by for example…

Demanding semantic interoperability: Providers must demand that EHR suppliers fix minor bugs. But it is probably more efficient to address the underlying issue of complexity and high switching cost by demanding semantic interoperability.12 Interoperability means that different EHRs and IT systems speak a standardized language and can more easily be integrated with each other. This enables providers to procure different software modules that communicate with each other - rather than monolithic systems. This also reduces the switching cost and should make it easier to demand that more minor bugs get fixed.

Designing knowledge management structures to be continuously updated: Knowledge management structures entail how knowledge is passed from research publications to clinical practice (publications, guidelines, clinical decision support systems, local guidelines). If these structures are organic (i.e. no clear structure or hierarchy) then it can be difficult to update the knowledge. Investing resources in ensuring that knowledge structures are easier to continuously update (i.e. not just every fourth year) can mitigate knowledge lag and can be as impactful as investing resources in the research itself.
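As a toy sketch of what "speaking a standardized language" could look like between an ordering module and a lab module: both sides share one message schema and one code list, and the receiver rejects anything it cannot interpret instead of silently dropping it. The schema, the code system name and the test codes below are all hypothetical and do not correspond to any real standard or vendor API.

```python
import json
from dataclasses import dataclass, asdict

# Hypothetical shared vocabulary agreed on by both modules.
SHARED_CODE_SYSTEM = "example-lab-codes"
KNOWN_TEST_CODES = {"CSF-HSV-PCR", "D-DIMER"}


@dataclass(frozen=True)
class LabOrder:
    """Hypothetical shared message schema for a lab order."""
    patient_id: str
    code_system: str
    test_code: str
    callback_phone: str


def send_order(order: LabOrder) -> str:
    """Ordering module: serialize the order to the agreed wire format."""
    return json.dumps(asdict(order))


def receive_order(message: str) -> LabOrder:
    """Lab module: parse the message and fail loudly if it can't be understood."""
    # Missing or unexpected fields raise TypeError here.
    order = LabOrder(**json.loads(message))
    if order.code_system != SHARED_CODE_SYSTEM:
        raise ValueError(f"unknown code system: {order.code_system}")
    if order.test_code not in KNOWN_TEST_CODES:
        raise ValueError(f"unknown test code: {order.test_code}")
    return order


order = LabOrder("patient-123", SHARED_CODE_SYSTEM, "CSF-HSV-PCR", "555-0100")
received = receive_order(send_order(order))
print(received == order)  # True: both modules interpret the order identically
```

The design choice worth noting is the explicit failure: an order that appears on one screen but never arrives, or a phone number typed into a box nobody can read, is exactly the kind of silent mismatch a shared schema is meant to surface.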

V. The Bigger “So What”

Finally, the bigger “so what”: these principles hold true for many other large, complex and interdependent systems. Can you think of other phenomena characterized by:

usage with increasing complexity and growing scope

significant resource constraints and high switching costs?

Some examples to get you thinking:

Public transportation systems: Are often outdated and underinvested, with many bugs (areas with poor communication, or inefficient systems which could be much better). An increasing number of stakeholders and an increasing scope (e.g. replacing cars to a greater extent) combined with high switching costs make change slow, and mean that many minor bugs will persist over time

Energy sectors: Huge switching costs, high interdependency, an increasing number of stakeholders with various opinions, combined with resource constraints make change slow, even if there are clear bugs to address (phasing in new technologies and improving inefficiencies)

Governmental agencies: High interdependency with other stakeholders, in general constantly growing scope, resource constraints, and high switching costs

These are complex phenomena, but understanding the root causes underlying slow change can decrease frustration, and help one spend energy on the right problem.

VI. Emmetropes

Large, complex and interdependent systems will change slowly and have errors if they are growing, costly to test or central in many processes.

Slow change and long-lived myths/bugs are due to trade-offs, not ignorance or malevolence. Thus, a new corollary: "Never attribute to malice, stupidity or incentives, that which can be adequately explained by trade-offs across different dimensions."13

Some seemingly intractable problems can be addressed, if you shift to a different abstraction level and try to solve fundamental issues

Addressing root issues, such as increasing scope or switching costs, can have a greater effect than solely trying to speed up the change process (for fixing bugs or outdated medical knowledge)

More than half of physicians are dissatisfied with their EHR (AMA 2013), 75% of clinicians reporting one or more burnout symptoms state that the EHR is a contributor (Tajirian 2020), with possible mechanisms being increased time demands, increased documentation burden, complex usability, increased cognitive load, and increased electronic messaging volume (Budd 2023). One of Emmetropia’s readers also sent me this article, in which low EHR usability correlated with clinician burnout (with a dose-response relationship). And on a personal level, I have yet to meet a clinician that appreciates their EHR and feels that it makes life easier for them 😅

Today age-adjusting D-dimer is the norm, with several studies (published a decade ago) supporting its use: See for example Schouten 2013, the 2014 ADJUST-PE trial, Douma 2010, Mullier 2014 and many others

This number is incorrect, outdated, and not very useful. If you read the article, you’ll see that one problem is defining what counts as knowledge, when basic knowledge has been created, and when it has reached clinical practice. However, it illustrates that there is a gap which is large, and oftentimes unnecessarily large.

Healthcare providers have many different stakeholders and the people tendering new IT-systems cannot only be those working clinically (as there are many other legal, financial, regulatory and political aspects to manage, which clinicians aren’t exposed to or knowledgeable about). Different roles can have different opinions on what is the “just right” amount of functionality. This can result in management thinking a system is “good enough” while clinicians find it terrible and unsafe for patients.

I realize this may sound weird, but I find mankind’s model for accumulating medical knowledge to be deeply beautiful in the same way that a painting, song or sunset can be beautiful. Humanity has somehow, despite all our intrinsic limitations, found a way to coordinate ourselves and systematically further our understanding of the life sciences - thereby giving humanity and our future generations more life. We have created a self-perpetuating dance which continues through generations. Yes, it is far from perfect - but it works. And our children, and our children’s children, and many generations thereafter will reap its benefits.

A common example is that way back (i.e. prior to 1991), we realized that patients that had suffered a heart attack often got arrhythmias (heart beating irregularly) afterwards. Since irregular heart rhythms could lead to deadly cardiac arrests, those patients were treated with anti-arrhythmic drugs. It really made a lot of sense. That is until a large study showed that patients treated with those drugs actually died more often. In that case, guidelines changed quite quickly.

A bonus for you dear footnote reader: Interestingly, meta-analyses (studies that pool the results of other research) on the same studies can reach opposite conclusions! Some examples: Lindbaek & Hjortdahl (short example), Perego et al (a bit longer, but more explanations), Stegenga (long, but guaranteed to blow your mind if you work with medicine or science)

Sometimes it takes more than 17 years. Sometimes it takes 40 years and a foolish doctor injecting something in his finger.

There are many other factors which affect the speed, direction and acceleration of medical research, but I’m saving that for another essay.

It’s difficult to study benefits from interoperability, due to the nature of studying a poorly defined, contextual and highly technical intervention. Nonetheless, I have difficulties believing that it doesn’t have significant downstream advantages. However, there are lots of challenges for realizing that kind of interoperability. This article is quite old, but captures several of the important challenges.

Hanlon’s Razor prevents misattribution: "Never attribute to malice that which is adequately explained by stupidity." Hubbard’s corollary to Hanlon’s Razor helps one see the how: "Never attribute to malice or stupidity that which can be explained by moderately rational individuals following incentives in a complex system of interactions." Hopefully, this new corollary will help one see the why.