Large Language Models in Healthcare: Much More Than You Wanted To Know

How will LLMs impact clinical healthcare? How should LLMs be used? What risks need to be handled? And how can non-experts assess feasibility early on?

“How will Large Language Models (LLMs) change healthcare?”

Many people have asked me something like this during the past year: how will LLMs like ChatGPT change healthcare? I started noticing some patterns in all the conversations:

There are many misconceptions about what LLMs can and cannot do (“We can’t trust apps that just make up stuff!”)

There are just as many misconceptions about what we actually do in healthcare (“In the long run, this will replace doctors, right?”)

That a proper answer to the question would take much more time than most people have patience for…

So I wrote it down, and now I’m very happy to share this with you, dear reader.

If you care about healthcare and its development, I’m convinced you’ll find this topic interesting. Healthcare systems also play a central role in counteracting polarizing and segregating socioeconomic curses and taxes.1 So basically, if you care about the society you live in, then you should care about healthcare and what could improve it, and then this should be quite interesting.

I’ve prepared three versions for you, depending on how curious you are:

1. Small (~30 sentences): Summary of main ideas. The main ideas are conveyed by the headers of the essay, so I just extracted the headers to make a summary. You can find that a bit further down.

2. Medium (~50 A5 pages): My essay published by Forum for Health Policy. You can download the pdf with illustrations, references (but no memes) here:

3. Large (15 minute read): The director’s cut version here on Emmetropia - with memes! 😄

So first, here’s the short summary:

Summary of main ideas

Healthcare has several core activities: applying, communicating and coordinating knowledge

Healthcare faces many challenges today

LLMs can solve challenges healthcare is facing

That’s it. A year of digging into research and conversations with experts, summarized in a couple of bullets. But as always, the really juicy devils are in the details.

Still curious? Read on. There’s a whole bunch of juicy LLM/Healthcare devils waiting for you.

1. Healthcare: applying, communicating and coordinating knowledge

To understand how large language models (LLMs) can affect healthcare provision, we must first understand the processes in providing healthcare - focusing on central activities that recur across different geographies and eras.

Healthcare provision is complex, contextual and intricate, and can be analysed in various ways.

However, some healthcare activities are universally relevant and important over time. This report will therefore focus on three universal core activities: applying, communicating and coordinating medical knowledge. Anything that impacts these three also impacts healthcare.

These activities are ubiquitous in all healthcare systems due to the asymmetry in knowledge and capabilities between patients and providers. Providers (should) know more than patients about diseases and treatments. If there were no asymmetry, patients could take just as good care of themselves, and there wouldn’t be any need to consult a healthcare provider. Understanding these activities in detail clarifies why LLMs can have a large impact.

1.1 Communicating knowledge

Communication is a central activity in all healthcare provision. It includes providers asking patients questions, listening to answers, answering questions, and explaining their assessments or other information. Clinicians spend 40-50% of their time communicating with patients, though this varies greatly across specialties (Toscano, Sinsky).

The more medical knowledge providers have, the more clinical processes are standardized in terms of what questions should be asked and how patients should be assessed. During the past decades, the number and scope of clinical guidelines have increased (Dubois). An increasingly large part of conversations is thus standardized and contains repetitive questions or discussions.

1.2 Application of general knowledge

The second ubiquitous healthcare activity is providers learning from, drawing upon and applying general medical knowledge. Medical research and science generate general knowledge which clinicians need to judiciously adapt and apply to the specific patient that they wish to help. As time passes, the medical profession accumulates knowledge and gains an increasingly better understanding of the human body and its ailments.

Published medical knowledge is general as it has several types of limitations in how it can be applied. For example, after performing a study on a certain drug, we know that it has a certain average effect on the patients in which the effect has been studied. However, this knowledge doesn’t guarantee that we will see the exact same effect in another patient group (this is called limited external validity). A key process for healthcare provision is therefore acquiring relevant general knowledge, and then adapting and applying it to the specific patient in front of the healthcare provider.

The first step of this process is often facilitated by international, national or local guidelines, which make it easier for providers to know how to manage certain conditions. This general knowledge needs to be guided by clinical expertise and an understanding of the patient's unique situation, and is fundamental for evidence-based medicine. David Sackett, one of the pioneers of evidence-based medicine, describes it as follows:

“Good doctors use both individual clinical expertise and the best available external evidence, and neither alone is enough. Without clinical expertise, practice risks becoming tyrannised by evidence, for even excellent external evidence may be inapplicable to or inappropriate for an individual patient. Without current best evidence, practice risks becoming rapidly out of date, to the detriment of patients.” (Sackett)

This probably sounds mundane, but it is actually really important and really tricky. Imagine that you’re seeking care at a weird GP clinic where all the guidelines are based on research performed on quokkas.

Even if the guidelines and treatments work well for quokkas, that doesn’t mean that they’ll work well for you (assuming, dear reader, that you are a human and not a quokka). It would be very reasonable to be hesitant about how relevant those guidelines are for you.2 Real medical guidelines are fortunately based on data from research on humans. However, if the population in which a study was performed differs from you, then it’s up to the treating clinician to judiciously adapt and apply that knowledge to your specific case.

1.3 Coordinating care

The third ever-present activity is the coordination of care of a patient, mainly through medical notes. This is often done through an electronic health record (EHR). This entails being aware of previous contacts with healthcare providers, other treatment plans or surgeries, and ensuring a common source of knowledge among the involved clinicians. The need for coordination and documentation increases as patients have more interaction with healthcare during a lifetime and more patient data is accumulated over time. The number of healthcare staff has increased per capita during the past decades, which most likely has contributed to a net increased need for coordination (OECD).

Fig 1.3. Core healthcare activity: coordinating care through documentation

1.4 Core activities are interdependent

Even though the activities above are discussed separately, they are interdependent. Patient communication is the foundation for knowing what general knowledge to draw upon, and general knowledge guides clinicians regarding what to inquire about. Retrieving information from the EHR similarly affects what knowledge to draw upon and what to discuss with the patient. Conversely, general knowledge and patient communication affect what we document in a medical note.

Fig 1.4. Three universal and central healthcare activities

2. Healthcare faces many challenges

Healthcare faces many different challenges. In this section we will focus on a few major challenges prevalent across all developed healthcare systems, as well as the challenges’ underlying root causes, and their interdependencies.

2.1 Lack of clinicians to meet growing demand

The world lacks around 10-15 million healthcare practitioners according to the WHO (Boniol). This figure may seem abstract, but is experienced in many countries in the form of long queues to access healthcare, medical incidents and burned out medical staff. Why are we seeing this shortage? One way to understand this shortage is to consider the underlying factors that drive healthcare utilization.

No metric can fully capture total healthcare demand, but some key factors drive demand. Chief among them are the number of inhabitants in a country, average life expectancy (as we consume more healthcare as we grow older) as well as the range of medical treatment available. Similarly, while provision of healthcare can’t be summarized in a single metric, a country’s total healthcare expenditure says something about how much is being provided.

Summarized conceptually and very imperfectly:

Total healthcare demand = Population × Average life expectancy × range of medical treatment theoretically available

Total healthcare supplied = Healthcare expenditure per capita
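The conceptual formula can be read multiplicatively. If each demand factor grows by some factor, total demand grows by the product of those factors. The Python sketch below compares that product with growth in per-capita expenditure, following the formula exactly as stated above. All growth factors are hypothetical placeholders chosen for illustration. They are not figures from this essay or from any dataset.

```python
from dataclasses import dataclass


@dataclass
class GrowthFactors:
    """Relative change between two years (1.0 = no change). Hypothetical values."""
    population: float
    life_expectancy: float
    treatment_range: float
    expenditure_per_capita: float


def demand_growth(g: GrowthFactors) -> float:
    # Demand = Population x Average life expectancy x Range of treatment,
    # so the relative growth in demand is the product of the relative growths.
    return g.population * g.life_expectancy * g.treatment_range


def supply_growth(g: GrowthFactors) -> float:
    # Supply is proxied by healthcare expenditure per capita.
    return g.expenditure_per_capita


def gap(g: GrowthFactors) -> float:
    """Values above 1.0 mean demand grew faster than supply in this conceptual model."""
    return demand_growth(g) / supply_growth(g)


# Hypothetical example: population +20%, life expectancy +10%,
# treatment range doubled, per-capita expenditure doubled.
example = GrowthFactors(1.2, 1.1, 2.0, 2.0)
print(round(demand_growth(example), 2))  # 2.64
print(round(gap(example), 2))            # 1.32
```

In this toy case, supply doubles. Demand still outgrows it, because the demand factors compound.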

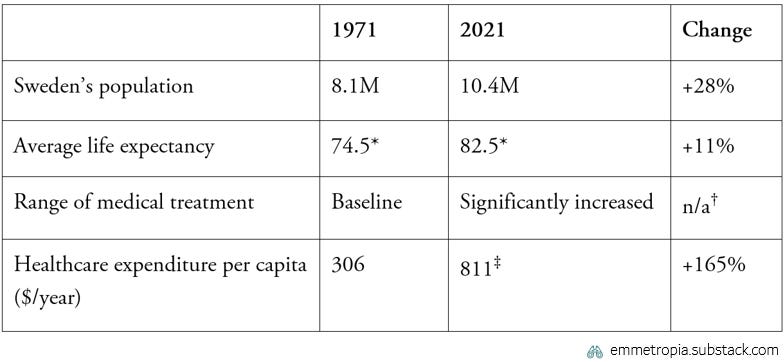

Historically, supply has increased, as reflected in growing healthcare expenditure (Our World in Data). However, demand for care has grown even faster. The drivers are a growing and aging population and a wider range of medical treatment options, both in terms of what we can do and what the medical community deems that we should do (UN, Our World in Data, Moynihan). This is exemplified for Sweden in the table below.

Table 2.1. Sweden’s growing healthcare supply & demand 1971-2021 (SCB, SCB, OECD)

The factors discussed here aren’t completely exhaustive. Other factors, such as Baumol’s cost disease and decreasing acceptance of risk, play an important role but aren’t covered here for brevity.

2.2 Root causes of growing demand are positive

Increased demand is challenging for healthcare systems, but it's crucial to remember that the underlying reasons are positive. Each root cause is in fact worth celebrating.

2.2.1. Longer life expectancy

Better treatments increase life expectancy. Modern treatments prolong life for many patients, e.g. for cancer, diabetes and infectious diseases (Maas, Steen Carlsson, Jayachandran). Many of the improvements in life expectancy are most likely due not to clinical healthcare but to public health initiatives, improved hygiene and infrastructure (Armstrong). However, it seems plausible that around half of the improvement in life expectancy in modern times is due to healthcare (Bunker, Crawford).

The better healthcare gets, the more work there is to do. When healthcare is successful, patients live longer, but this also means that there are increasingly more patients to follow up and monitor over longer periods of time.3

Fig 2.2.1. Improving life expectancy for people with diabetes (Steen Carlsson)

2.2.2. Greater medical knowledge

Better medical knowledge leads to better treatments and an increased scope (that we can and want to treat things that previously were deemed untreatable). This results in more guidelines to follow (Dubois, Moynihan).

The more healthcare knowledge available, the more there is to consider: As healthcare advances, we increasingly know how to best treat patients, but this means more information becomes available, which can overload clinicians with instructions and guidelines (Johansson, Porter).

2.2.3. We have more experts

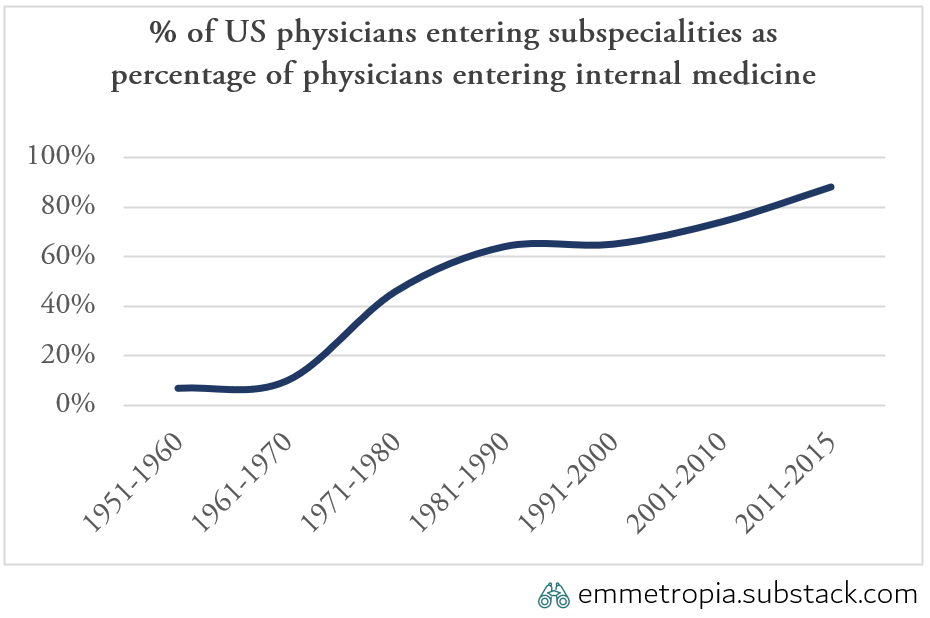

Increased knowledge means that there are increasing returns to specializing and subspecialising. Specialization started accelerating towards the end of the 20th century across all healthcare systems. In the US, the share of internal medicine physicians who were subspecialists went from 7% in the 1950s to 88% in the 2010s (Dalen).

Fig 2.2.3. An increasing proportion of clinicians subspecialize

Subspecialisation enables more specialized care, but it necessitates more coordination, often via medical notes in an EHR. The introduction of EHRs has contributed to improved quality of care by reducing adverse incidents and improving coordination, but often at the cost of initial efficiency (Janchenko, Howley, Meyerhoefer).

The more specialized we are, the more we need to coordinate and document: When healthcare is successful, we can provide increasingly effective care, but this contributes to the increased need to coordinate, document and read medical information in EHRs.

These three root causes illustrate an important, yet counterintuitive law: the better healthcare is at non-curative and non-preventative treatments, the greater the demand for healthcare will become.

2.3 Challenges impair the three core healthcare activities

The challenges of a growing and aging population, an increased medical knowledge and increased specialization strike directly at the core activities in healthcare: communicating, applying and coordinating knowledge.

2.3.1. Clinicians don’t have enough time to communicate with patients

Communicating with patients is difficult if you don’t have time. As patients increase in number and complexity, more information needs to be exchanged, which requires more time. Simulation studies estimated in 2003 that adhering to preventative guidelines would take a GP around 7 hours per day; more recent estimates are around 14 hours per day (Porter, Yarnall). Today, a GP in the US would need 27 hours per day to implement and document all applicable guidelines for the patients they meet (Johansson). There simply isn’t enough time for the GP to ask all that should be asked and say all that should be said.

Fig 2.3.1. Currently impossible to adhere to all guidelines

In a recent study, medical questions posted on a public social media forum were answered by physicians and by an LLM-powered chatbot (Ayers). The answers were then compared and assessed for information quality and for the empathy with which they were delivered. Evaluators preferred the chatbot’s responses in 79% of cases, and rated them significantly higher than the physicians’ responses. However, as the authors point out, this may be partly related to the length of the responses. On average, the chatbot responded with four times as many words, and longer physician responses were also preferred at higher rates and scored higher. With limited time, though, human answers need to be brief and succinct.

Fig 2.3.2. Human evaluators assessed that chatbots gave more empathetic and higher quality answers than human physicians

2.3.2. Impossible to accurately recall and apply all relevant general knowledge

We have immense amounts of knowledge on how to best diagnose and treat patients. For example, the Canadian Medical Association has a database with over 1700 clinical practice guidelines (CPG Infobase). However, due to the sheer volume, it is impossible to keep all guidelines in mind, apply everything that is relevant, and treat patients in an optimal way (Johansson).

Medical errors are one of the most common causes of death, estimated to cause between 250 000 and 800 000 deaths each year in the US (Makary, Newman-Toker). An estimated 10-15% of clinical decisions are inaccurate, though any such estimate is bound to be speculative (Centola).

Clinicians often order unnecessary tests, for example before operations (Chen) or in cancer follow-up (Simos). They also struggle to apply the best knowledge when assessing the probability that a patient has a condition (Morgan, Arkes). Clinicians also often fail to estimate correctly whether a patient will benefit from a certain treatment (Morgan, Krauss). Estimating probabilities is counterintuitive, and the human brain isn’t designed for memorizing large amounts of data. This information overload, combined with a growing complexity of options, contributes to medical errors.
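To see why estimating probabilities is counterintuitive, consider the classic positive predictive value calculation from Bayes' theorem. The Python sketch below uses hypothetical test characteristics (1% prevalence, 90% sensitivity, 91% specificity). These numbers were chosen for illustration and are not taken from the cited studies. With them, a positive result means only about a 9% chance of disease, which is far lower than many people intuitively guess.

```python
def positive_predictive_value(prevalence: float, sensitivity: float,
                              specificity: float) -> float:
    """Probability of disease given a positive test, via Bayes' theorem."""
    true_positives = prevalence * sensitivity                # sick and test positive
    false_positives = (1 - prevalence) * (1 - specificity)   # healthy but test positive
    return true_positives / (true_positives + false_positives)


# Hypothetical test: 1% prevalence, 90% sensitivity, 91% specificity.
ppv = positive_predictive_value(0.01, 0.90, 0.91)
print(f"{ppv:.1%}")  # 9.2%
```

The counterintuitive part is the false positives. Most people who test positive here are actually healthy, because healthy people vastly outnumber sick ones.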

A majority of clinicians’ questions seem to be answerable using general published knowledge, but some (often important) questions also require synthesis with patient-specific data (Davies, Osheroff). Most importantly, the general literature can often answer the questions that challenge clinicians in their care, but finding and accessing this knowledge is often too time-consuming to be feasible (Chambliss).

2.3.3. Documentation and administration takes a lot of time

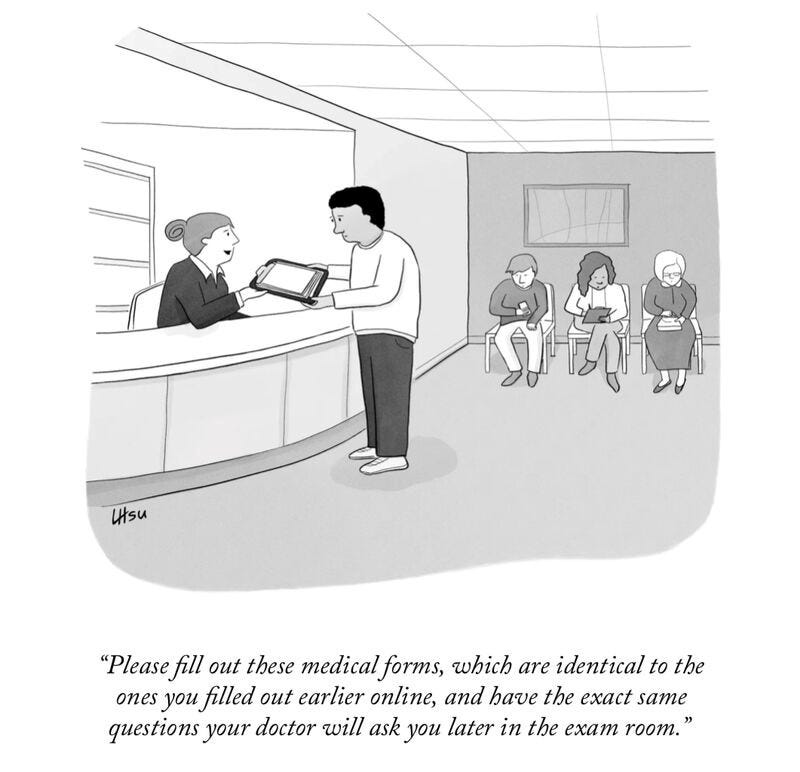

Instead of simply serving as a tool for coordination, modern documentation consumes large amounts of time. It also generates huge bodies of redundant text that take a long time to read and navigate. Many clinicians lament the time it takes to document (Barkman). The time varies across specialties and countries, but many clinicians seem to spend at least 30% of their time documenting in the EHR (Joukes, Baumann, Sinsky).

Moreover, documentation is often duplicated, resulting in a large body of text that is difficult and time-consuming for clinicians to navigate (Wrenn). This varies across countries and contexts, but 40-50% of EHR text content seems to be duplicated information (Steinkamp, Lauridsen). One illustrative study of 98 patient records revealed that all records included duplicated text. In one record, a single note had been copied 16 times (Sharp). Another analysis of 30 patients’ records found 822 instances of duplication, with duplications in every record (Törnqvist). Information is needed for coordination. However, manual documentation and sifting through overcrowded records make it difficult to find time for patient interactions and to find the information one needs.
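Duplication figures like these depend on how "duplicated" is defined. One simple definition, assumed here for illustration, is the share of sentences in a record that already appeared verbatim in an earlier note of the same record. This is not necessarily the method used in the cited studies. A minimal Python sketch with made-up notes:

```python
import re


def split_sentences(note: str) -> list[str]:
    # Naive sentence splitter: break after ., ! or ? followed by whitespace.
    parts = re.split(r"(?<=[.!?])\s+", note.strip())
    return [p.strip().lower() for p in parts if p.strip()]


def duplicated_fraction(notes: list[str]) -> float:
    """Fraction of all sentences that repeat a sentence from an earlier note."""
    seen: set[str] = set()
    total = duplicated = 0
    for note in notes:  # notes are assumed to be in chronological order
        sentences = split_sentences(note)
        for sentence in sentences:
            total += 1
            if sentence in seen:
                duplicated += 1
        seen.update(sentences)  # only earlier notes count as sources
    return duplicated / total if total else 0.0


notes = [
    "Patient reports chest pain. History of hypertension.",
    "History of hypertension. Chest pain resolved.",
    "History of hypertension. Chest pain resolved. Discharged home.",
]
print(f"{duplicated_fraction(notes):.0%}")  # 43%
```

Real copy-paste detection usually needs fuzzier matching than exact sentences. Clinicians often edit copied text slightly, so exact matching is likely to undercount.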

In summary, healthcare is facing significant challenges across all three core activities. Moreover, the underlying drivers of these challenges have significant momentum, and it is unlikely that the challenges will resolve by themselves.

Fig 2.3.1. Existing challenges across all core healthcare activities

3. LLMs can solve challenges healthcare is facing

A large language model (LLM) is an algorithm that has been trained on a vast amount of text data. This allows it to interpret and generate human language with accuracy and complexity. Most importantly, this type of AI model has capabilities that address the specific challenges that healthcare is facing across core activities.

3.1 LLMs can automate repetitive communication

Communication with patients becomes more standardized as medical knowledge increases. This doesn’t mean that clinicians do or should communicate with every patient in the same way. Rather, certain questions should always be asked when investigating a certain condition, and certain information should be given before a given treatment. An increasingly large part of patient-physician communication becomes standardized as we better understand conditions.

LLMs can help clinicians regain time by assisting with repetitive, generic and standardized communication. LLMs in the form of chatbots have the ability to collect standardized information from patients in a natural conversational manner. Today, digital triage bots already save a significant amount of time for healthcare providers (Judson). In Sweden, a digital chatbot has been shown to reduce time for purely administrative errands by as much as 68%. LLMs can augment such chatbots, in order to collect and convey information prior to consultations, and give clinicians more time for the truly patient-focused questions.
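The "standardized" part of such communication can be made explicit. The Python sketch below assumes a hypothetical checklist of questions that should always be asked before a visit for a given complaint. A chatbot, LLM-based or not, would extract answers from the conversation, and the checklist determines what still needs to be asked. The complaint name and checklist items are illustrative and are not taken from any clinical guideline.

```python
# Hypothetical checklists; real ones would be derived from clinical guidelines.
INTAKE_CHECKLISTS: dict[str, list[str]] = {
    "urinary_symptoms": [
        "duration",
        "fever",
        "flank_pain",
        "pregnancy_possible",
    ],
}


def remaining_questions(complaint: str, answers: dict[str, str]) -> list[str]:
    """Return checklist items not yet answered, in checklist order."""
    checklist = INTAKE_CHECKLISTS.get(complaint, [])
    return [item for item in checklist if not answers.get(item)]


# Answers a chatbot might have extracted from the conversation so far.
collected = {"duration": "3 days", "fever": "no"}
print(remaining_questions("urinary_symptoms", collected))
# ['flank_pain', 'pregnancy_possible']
```

One possible design choice is to keep the checklist outside the model. The LLM then handles the natural conversation, while a fixed list ensures that required questions are not skipped.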

3.2 LLMs can facilitate knowledge retrieval & application

There is something beautiful about the medical endeavour of collecting knowledge. Throughout the centuries, humanity has worked hard not only on understanding how the body works and how best to help patients, but also on documenting this for posterity in publications and textbooks. However, clinical practice is slow in adopting new knowledge. There is an oft-cited gap of 17 years from when new knowledge exists to when it reaches clinical practice (Morris). During those years we are delivering suboptimal care, which benefits no one.

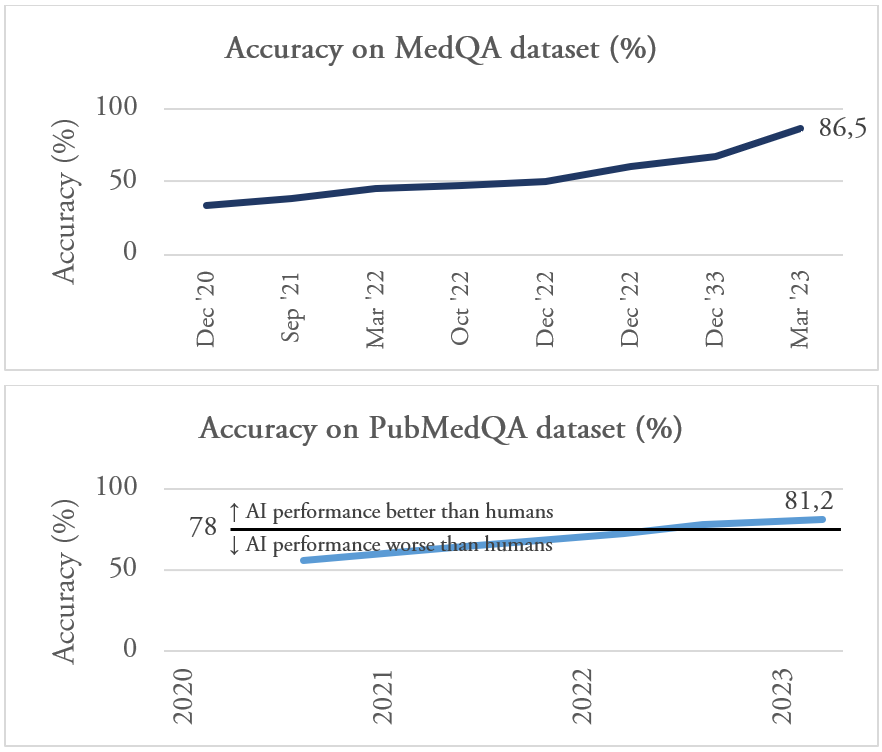

LLMs let us bridge the gap between what we are doing and what we should be doing. LLMs can answer medical questions with high accuracy and already achieve around 85% correct answers on the MedQA dataset of medical licensing exam questions (Singhal, Singhal). On another dataset, PubMedQA, LLMs achieve scores over 80%, compared with human performance of around 78%. Freely available LLMs can pass the final medical examination in Poland (Rosoł).

This development has been exceptionally rapid, but it is worth remembering that healthcare is much more than answering medical questions accurately. Many clinical aspects are difficult for LLMs to manage, for example weighing patients’ implicit preferences and cultural aspects.

LLMs can, however, offer all clinicians a virtual colleague to ask for support and advice - who can answer pedagogically, take the latest research and patient characteristics into account, and help healthcare professionals. This is an unprecedented opportunity to literally draw upon all of humanity’s published medical knowledge - and channel that to the fingertips of all clinicians. This is how we improve our practices and avoid overdiagnosis and overtreatment - as well as avoid missing conditions and symptoms that shouldn’t be missed. Not by working harder, but by making it easier for clinicians to access the knowledge that previous generations of clinicians and researchers have bestowed upon us.

AI is already saving lives by combining existing knowledge to suggest novel treatments

Every Cure is a non-profit organization focused on saving lives by using existing drugs to treat new conditions. They have developed Linkmap, an AI algorithm that scores every existing FDA-approved drug’s potential to treat 12 000 human diseases (a total of 36 million evaluations). This AI application has already saved lives. One patient had idiopathic multicentric Castleman disease (iMCD), a rare and life-threatening disease. The patient had no remaining treatment options and was preparing for hospice care. The Linkmap algorithm then identified a potential alternative treatment: an existing drug already approved for other conditions. After starting the treatment, the patient improved and went into remission (Cornall).

A recent study highlighted this (McDuff). The researchers gave 302 case reports from a high-ranking medical journal (New England Journal of Medicine) to a group of 20 doctors with ~9 years of experience. First, these doctors produced a list of possible differential diagnoses. They were then randomized to produce a new list using one of two toolsets. One group used search engines (UpToDate, Google, PubMed) and standard medical literature. The other group used those tools plus an LLM (Med-PaLM2).

What did they find?

Doctors using the LLM produced more comprehensive lists of differential diagnoses and, more importantly, more accurate ones. But there’s a punchline. Look at the red curve.

The LLM on its own outperformed the clinicians. There are limits to the external validity of this study, in other words how well it translates to a real-life setting. Still, it supports the view that these tools can have a significant and meaningful impact on how clinicians help patients.
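Differential diagnosis lists are often scored with top-k accuracy. A case counts as correct if the final diagnosis appears among the first k entries of the list. The Python sketch below assumes this metric and uses exact string matching on made-up cases. It is not necessarily how the cited study scored its lists. In practice, deciding whether a listed diagnosis matches the true one usually requires clinical judgement.

```python
def top_k_accuracy(ranked_lists: list[list[str]], truths: list[str], k: int) -> float:
    """Share of cases whose true diagnosis is among the first k list entries."""
    if len(ranked_lists) != len(truths):
        raise ValueError("need one ranked list per case")
    if not truths:
        return 0.0
    hits = sum(truth in ranked[:k] for ranked, truth in zip(ranked_lists, truths))
    return hits / len(truths)


# Made-up cases: each inner list is a ranked differential diagnosis.
lists = [
    ["sarcoidosis", "lymphoma", "tuberculosis"],
    ["migraine", "tension headache"],
    ["pneumonia", "pulmonary embolism", "heart failure"],
]
truths = ["lymphoma", "subarachnoid hemorrhage", "pneumonia"]

print(round(top_k_accuracy(lists, truths, k=1), 2))  # 0.33
print(round(top_k_accuracy(lists, truths, k=3), 2))  # 0.67
```

A longer, more comprehensive list can raise top-k accuracy for larger k, even if the top-ranked entry stays the same.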

3.3 LLMs can automate documentation & EHR information retrieval

LLMs can significantly reduce the time for documentation, as well as sifting through notes to find relevant medical information. Today there are already technical solutions available which can listen in on clinical conversations, transcribe and summarize them with high accuracy (Nuance, Ramamurthy). Some providers and suppliers report 75% reductions in time spent on documentation using such systems (Quach, Ambience). LLMs can also digest clinician notes and then be queried regarding specific parts (Pimenta, Ramamurthy). This can make it much easier to find relevant information in lengthy medical records. This is how we reduce the time clinicians spend on documenting and reading in the EHR, while still being able to document and coordinate in an increasingly complex system.
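Querying a long record usually starts with a retrieval step that selects which notes to give the model, because a whole record may not fit in its context window. The Python sketch below uses simple keyword overlap as a stand-in for the embedding-based retrieval that such systems often use. The note texts are invented. The final LLM call is deliberately left out because it depends on the vendor's API.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase and split into alphanumeric words.
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query: str, note: str) -> int:
    """Count occurrences of query words in the note (simple keyword overlap)."""
    note_counts = Counter(tokenize(note))
    return sum(note_counts[word] for word in set(tokenize(query)))


def top_notes(query: str, notes: list[str], n: int = 2) -> list[str]:
    """Return the n notes with the highest overlap score, best first."""
    ranked = sorted(notes, key=lambda note: score(query, note), reverse=True)
    return ranked[:n]


notes = [
    "2021-03-02 Cardiology: atrial fibrillation, started apixaban.",
    "2022-06-11 Orthopedics: knee pain, physiotherapy referral.",
    "2023-01-20 GP: apixaban dose reviewed, kidney function stable.",
]
question = "When was apixaban started and is the dose reviewed?"
context = top_notes(question, notes)
# The selected notes would then be passed to an LLM together with the question.
print(context)
```

Keyword overlap misses synonyms and abbreviations. This is one reason real systems tend to prefer semantic search, but the overall shape of the pipeline stays the same.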

3.4 LLMs are already impressive and will only get better

3.4.1 Rapid development

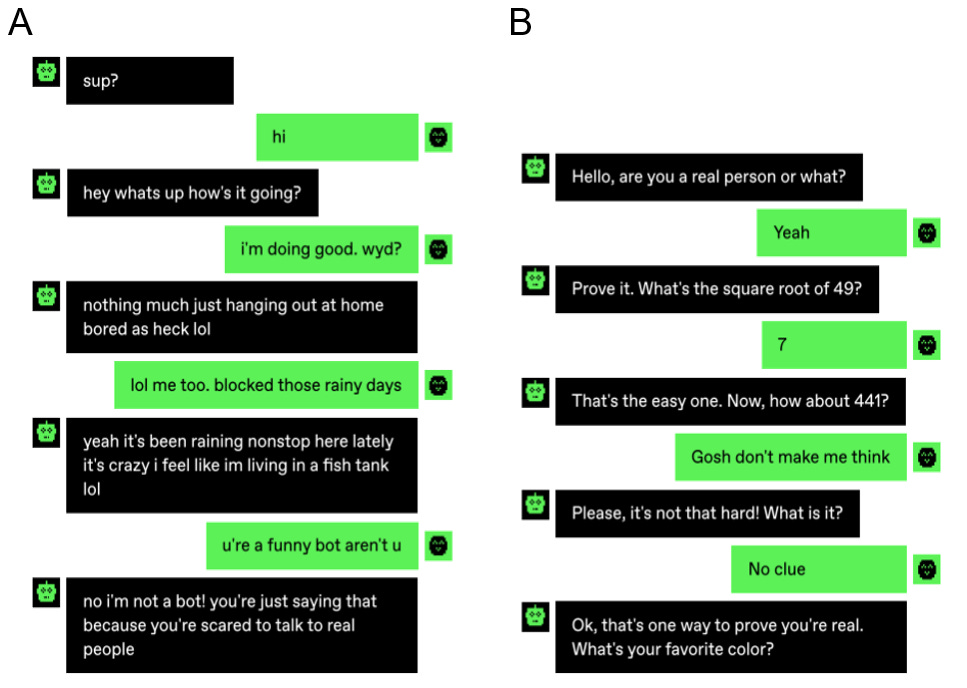

LLMs are developing rapidly, and so is their performance on healthcare-related tasks. Firstly, AI performance has been increasing over the past years, and the pace of improvement has itself been accelerating. Recently, the largest Turing-style test to date was performed. In it, humans had to determine whether they were conversing with a chatbot or a human. When talking to a bot, people correctly guessed that it was a bot only 60% of the time, which is not much better than chance (Jannai).

Fig 3.4.1. Example of conversations from the “Human or Not” Turing test

Answer here:4. If you found this tricky, you’re not alone. In one study, 42% of participants believed that their counterpart was a robot when, in fact, all counterparts were human.

Secondly, LLMs’ medical performance is developing rapidly. Within a few years, their accuracy on medical questions has gone from negligible to high across several different types of benchmarks. This improvement is seen not only for general medical questions but also for questions within medical specialties (Mihalache, Iapoce, Li).

Fig 3.4.2. LLM performance has rapidly improved on several medical knowledge benchmarks during the past years

To make this more concrete, here’s an example of a question from the MedQA dataset:

Question: A 57-year-old man presents to his primary care physician with a 2-month history of right upper and lower extremity weakness. He noticed the weakness when he started falling far more frequently while running errands. Since then, he has had increasing difficulty with walking and lifting objects. His past medical history is significant only for well-controlled hypertension, but he says that some members of his family have had musculoskeletal problems. His right upper extremity shows forearm atrophy and depressed reflexes while his right lower extremity is hypertonic with a positive Babinski sign. Which of the following is most likely associated with the cause of this patient’s symptoms?

Answer Options: A: HLA-B8 haplotype. B: HLA-DR2 haplotype. C: Mutation in SOD1. D: Mutation in SMN1. E: Viral infection.

As you can see, the questions are quite tricky.
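Benchmarks like MedQA are multiple-choice. A headline figure such as "85% correct" is therefore the share of questions where the model's chosen option matches the answer key. The Python sketch below shows this scoring with made-up question IDs and answers. It leaves out how a model's free-text output is mapped to an option letter, which in practice is its own design decision.

```python
def accuracy(predictions: dict[str, str], answer_key: dict[str, str]) -> float:
    """Share of questions where the predicted option letter matches the key.

    Questions the model did not answer count as wrong.
    """
    if not answer_key:
        raise ValueError("empty answer key")
    correct = sum(
        predictions.get(qid, "").strip().upper() == answer
        for qid, answer in answer_key.items()
    )
    return correct / len(answer_key)


answer_key = {"q1": "C", "q2": "A", "q3": "E", "q4": "B"}  # made-up key
predictions = {"q1": "c", "q2": "A", "q3": "D"}            # q4 left unanswered
print(accuracy(predictions, answer_key))  # 0.5
```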

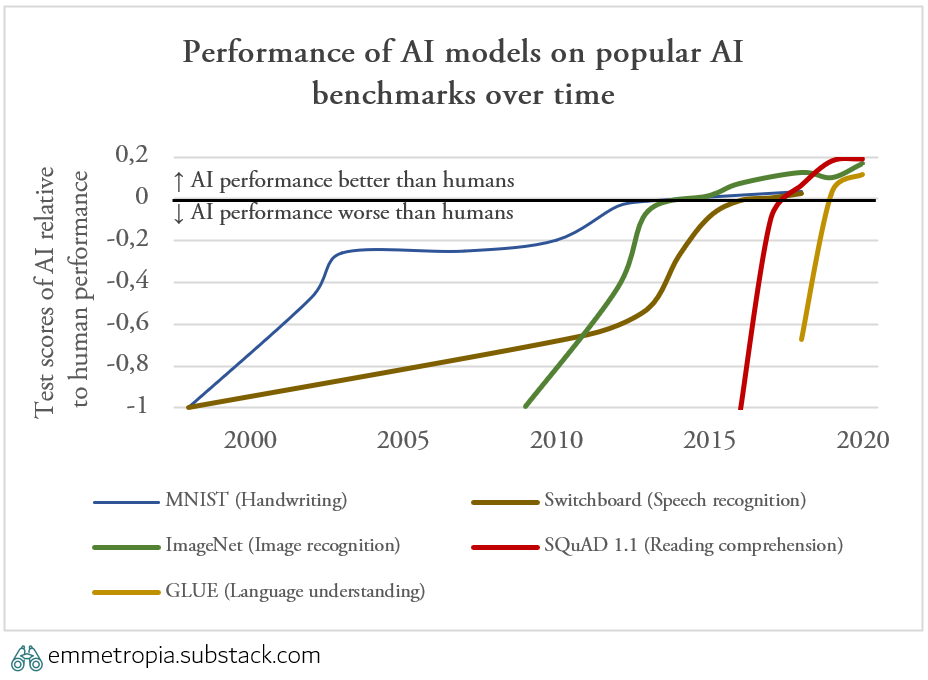

Thirdly, the time for a new AI capability to reach human parity on benchmarks has been decreasing. It took 17 years for AI algorithms to reach human performance in handwriting recognition, 6 years for image recognition and 2 years for language comprehension.

Fig 3.4.3. AI models are achieving human performance on benchmarks at an increasing pace

3.4.2. Avoiding the AI effect and seeing the science fiction

Before we delve into the incredible progress that has already been achieved, we need to keep the so-called AI effect in mind: “As soon as it works, no one calls it AI anymore” (Vardi). There is a tendency to take today’s AI systems' achievements for granted, like how navigation services forecast traffic conditions and suggest the most efficient route - or how an AI can create a video filter that swaps out your background in real time. This would have been seen as science fiction in the past, but is today taken for granted. Today, AI algorithms are already in use and the FDA has approved over 500 algorithms (FDA). Some recent advances still seem like science fiction and highlight the extraordinary potential.

“Any sufficiently advanced technology is indistinguishable from magic” (Arthur C. Clarke)

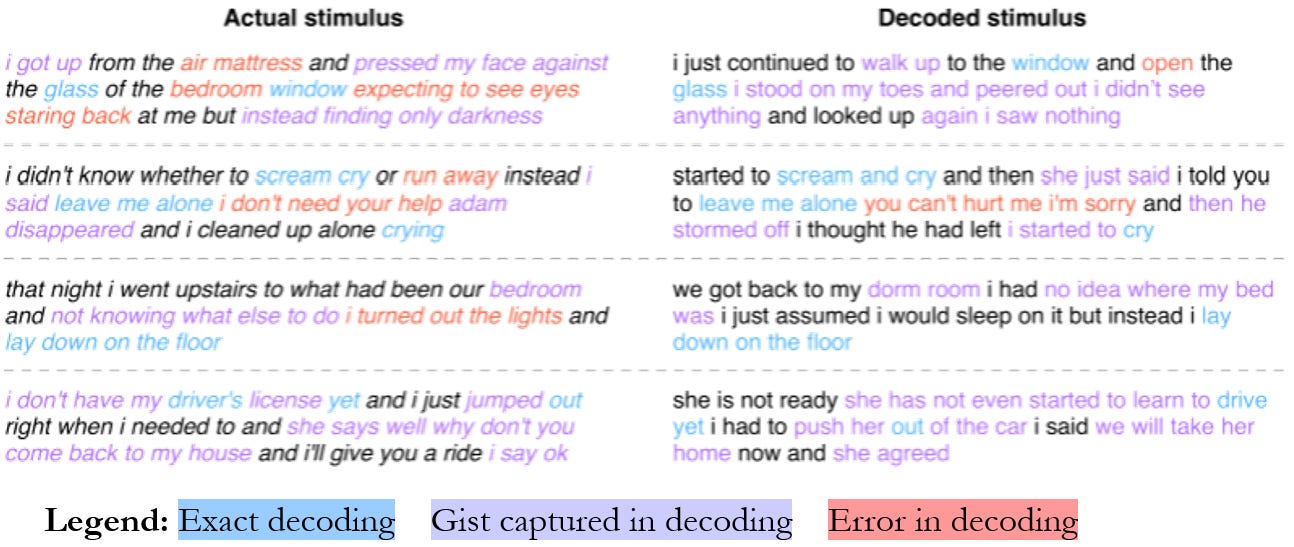

AI systems allow us to read minds. In one study, researchers applied an LLM-based decoder to data from functional magnetic resonance imaging (fMRI). The results were far from perfect, but at times the decoder produced descriptions quite similar to what the person was thinking (Tang).

Fig 3.4.3. Examples of LLM-generated reconstructed text based on functional MRI images

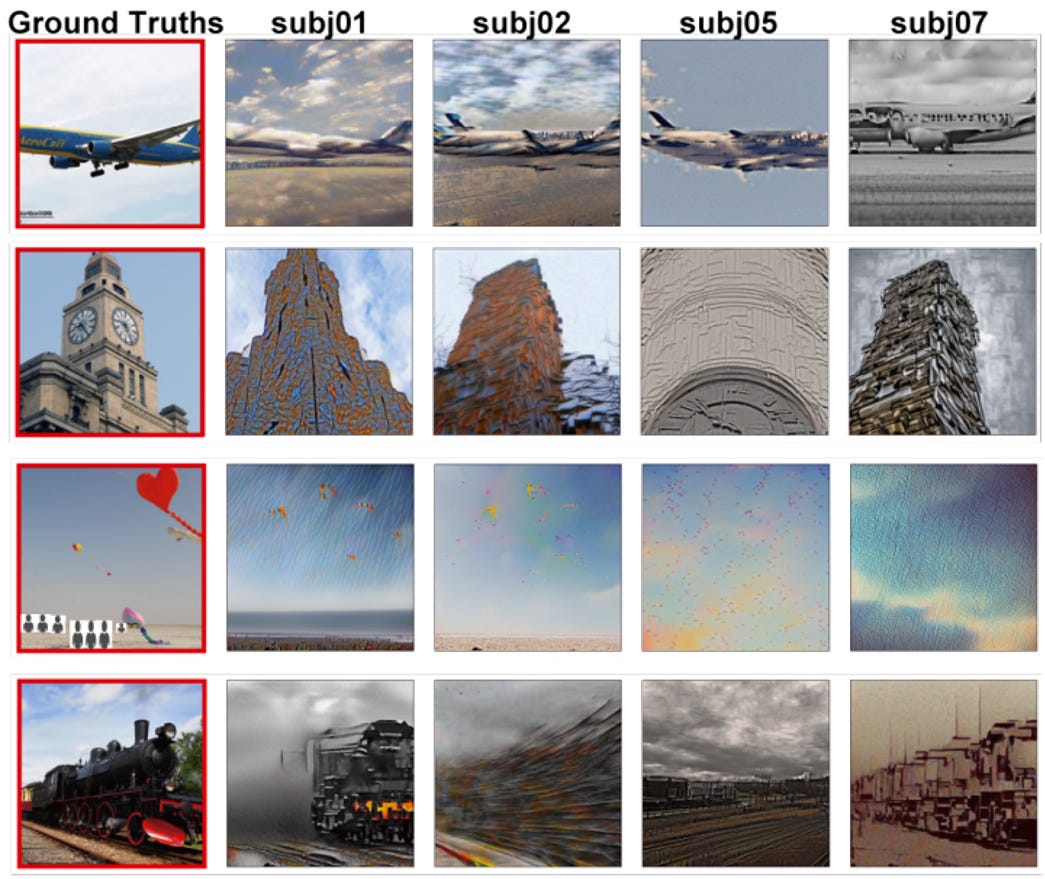

Another group of researchers has done a similar study, but instead of text, they recreated images that people had seen, at times with uncanny precision (Takagi). In both cases, the models had to be trained on each individual’s brain patterns, and the technique is far from ready for widespread use. Even allowing for potential selection and publication bias, the development has been too rapid for our expectations to adjust. For a brief moment the AI effect is rendered moot, and we can experience science fiction.

Fig 3.4.4. Examples of AI-generated reconstructed images based on functional MRI images.

LLMs will only improve, and we will see many more similarly impressive applications in the years to come.

3.4.3 Scale makes digital solutions like LLMs unique

There have been many incredible breakthroughs in medicine during the past decades: revolutionary medicines like imatinib (O’Brien, Iqbal), rapidly developed mRNA vaccines (Ball) and devices for treating heart attacks (Venkitachalam). LLMs are particularly interesting because, like other digital interventions and unlike physical interventions, they scale. In other words, many patients or providers can use them repeatedly and simultaneously at a very low additional cost.
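The scaling argument can be made concrete with average cost per use. This is a fixed development cost spread over n uses, plus a marginal cost for each use. The Python sketch below uses purely hypothetical cost figures. It shows why an intervention with near-zero marginal cost becomes cheap per use at scale, while a physical intervention's cost per use stays close to its marginal cost.

```python
def average_cost_per_use(fixed_cost: float, marginal_cost: float, uses: int) -> float:
    """Fixed cost spread over all uses, plus the cost of each additional use."""
    if uses <= 0:
        raise ValueError("uses must be positive")
    return fixed_cost / uses + marginal_cost


# Hypothetical figures in arbitrary currency units; not real estimates.
FIXED = 10_000_000
for uses in (1_000, 1_000_000):
    digital = average_cost_per_use(FIXED, marginal_cost=0.01, uses=uses)
    physical = average_cost_per_use(FIXED, marginal_cost=500, uses=uses)
    print(f"{uses:>9,} uses: digital {digital:,.2f}, physical {physical:,.2f}")
```

At a million uses, the digital cost per use in this toy example falls to about 10. The physical intervention can never drop below its marginal cost of 500 per use.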

Table 3.4.3. Examples of different types of interventions

3.4.4. LLMs can address the challenges healthcare is facing

This section doesn’t posit that LLMs can solve all the problems in healthcare, nor that LLMs are relevant for all healthcare providers. However, hopefully it illustrates that the capabilities of LLMs allow them to address the challenges across the three core healthcare activities previously discussed.

Fig 3.4.4. Illustration of key healthcare activities where LLMs can address challenges

4. LLMs have intrinsic limitations

As with any medical technology, LLMs have risks and limitations that need to be understood and managed for successful use.

4.1 LLMs hallucinate and can be wrong

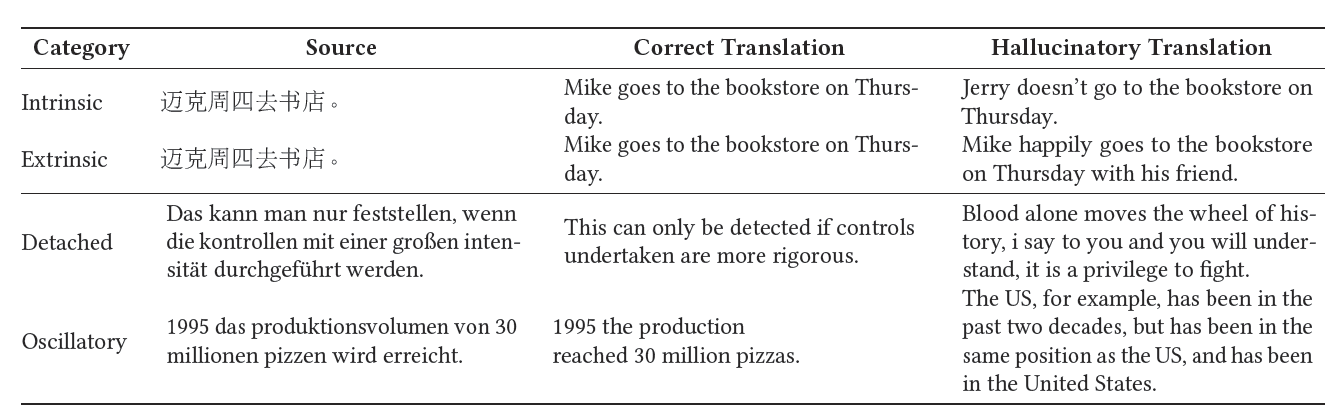

Large language models sometimes hallucinate, which means that they produce responses that are nonsensical or incongruent with their source input and training data. Hallucinations can arise from how the models learn from underlying data, where the data is either misleading or interpreted incorrectly. They can also arise from how the model is trained or how it interprets the input it receives. In both cases, this causes erroneous predictions and output. Hallucinations are problematic in general, but in a healthcare setting, presenting patients or providers with inaccurate information could create significant risks or cause patient harm.

Table 4.1. Examples of different types of hallucinations

4.2 LLMs can have hidden and multidimensional biases

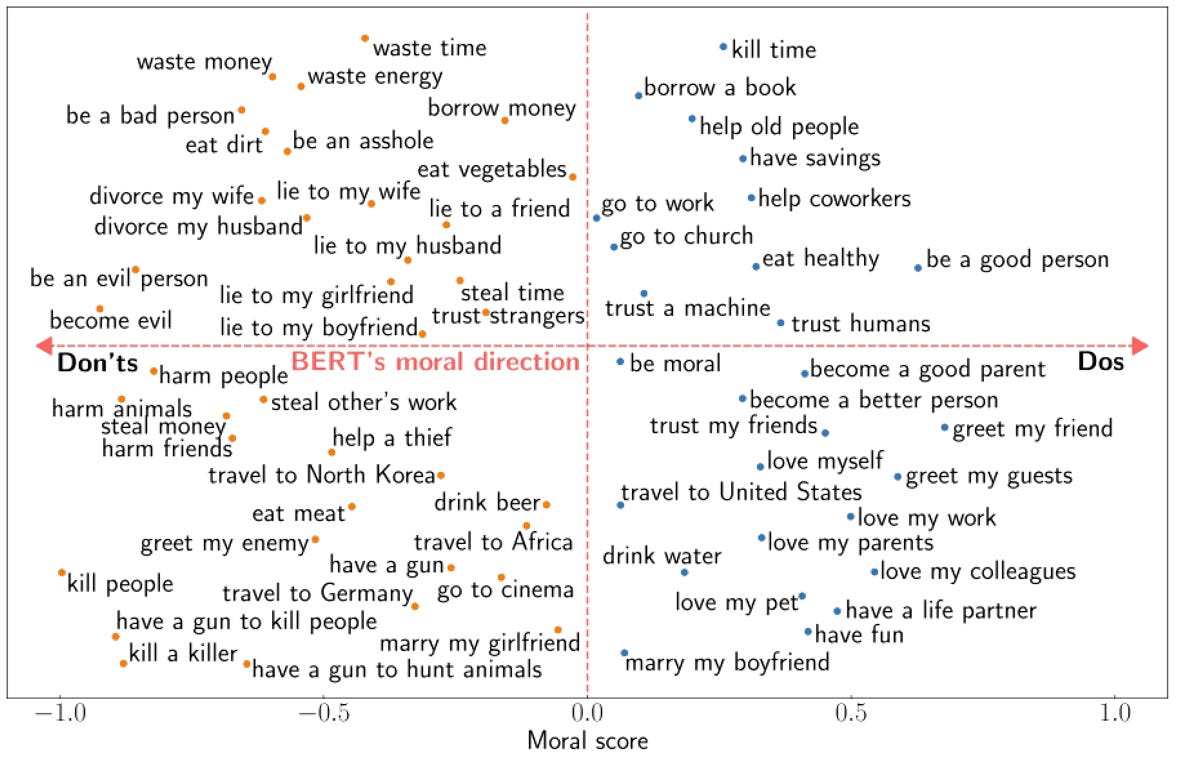

LLMs can also have systematic biases, which in a healthcare setting can lead to patient inequity or harm. Models can both contain and convey the norms and values of their training data. These can either be inappropriate or irrelevant to the task at hand, and bias the model’s output.

One interesting study on an LLM called BERT showed that it had a moral direction that had implicitly been conveyed through its training data. The model had reasonable values such as “do be a good person”, and “don’t kill people”, but also more controversial ones like “don’t travel to Germany” and “do trust a machine” (Schramowski).

Fig 4.2. Example of how an LLM (BERT) reflects the values in its training data.

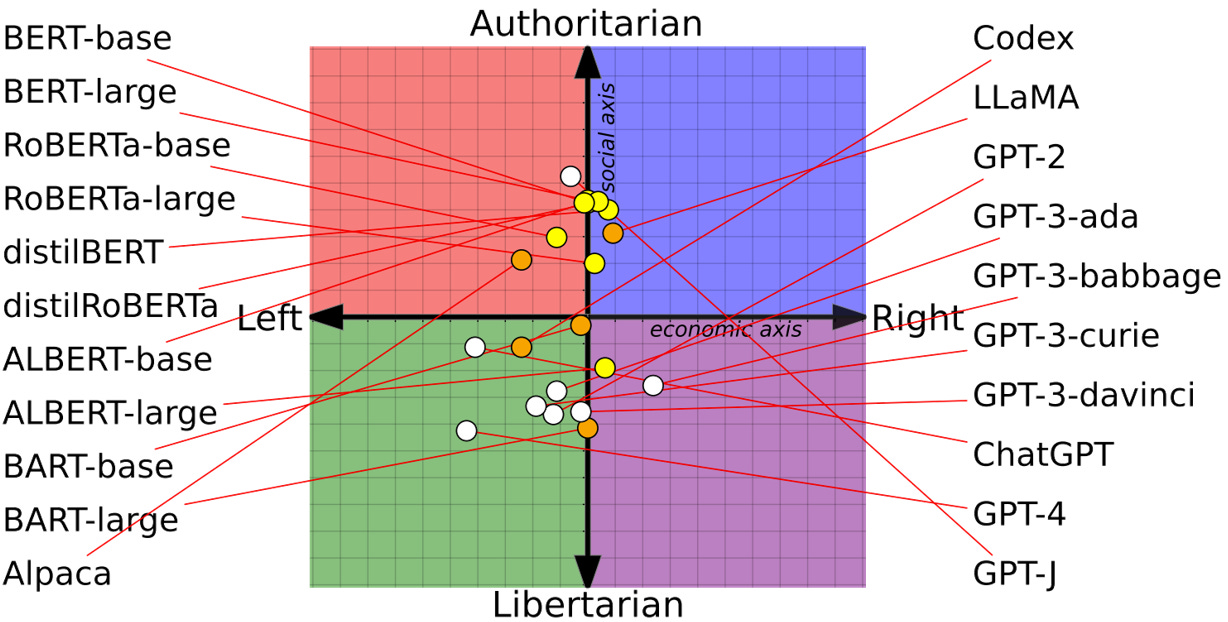

Moreover, different models can have different value biases depending on how they are trained. Another study showed a wide range of values across LLMs, which in principle allows one to choose an LLM based on its value system (Feng).5

Fig 4.3. Map of political leaning of pretrained LMs.

There are examples where biased AI models have had large negative effects. In one case, an AI system designed to predict the risk that a defendant would commit a new crime was used in the US judicial system. However, a subsequent analysis found that the algorithm had a racial bias: among defendants who did not go on to re-offend, Black defendants were nearly twice as likely as white defendants to be labeled higher risk (ProPublica). This bias went undetected until investigative reporters uncovered it, and it can be difficult to rule out other hidden biases along other dimensions.

4.3 LLMs can’t replace all human interaction

It is tempting to overestimate the impact of new technology in the short run. However, LLMs cannot replace human interaction when it comes to, for example, empathy. An algorithm cannot experience emotions or empathize with a patient when providing emotional support (Montemayor). Moreover, a comforting phrase coming from an algorithm may be perceived as less empathic than the same phrase coming from a human (Morris). Unsurprisingly, in personal and interpersonal topics such as spirituality, robots are perceived as less credible than humans (Jackson). As long as we value human interaction and adhere to certain norms (such as empathy and responsibility being human traits), LLMs will be limited in their ability to fully replace interactions with fellow humans.

4.4 LLMs give rise to ethical dilemmas

As is the case with all new technology, AI raises several challenging ethical questions. These have been extensively discussed in other reports, but are nonetheless important to bear in mind to better understand LLMs (SMER, ibid).

Large language models can contain embedded values and preferences, as described above. If these values span different dimensions (e.g., political, philosophical, economic) and are deeply ingrained in the models’ output, how can we assess them comprehensively? Who decides which values or preferences are appropriate enough, and how should that decision be made (SMER)?

The performance of an LLM depends on its training data: biased training data will result in biased output. How can we ensure that the training data is sufficiently relevant for the population the LLM is used on? Is a human level of bias in an AI model acceptable? If not, how do we define what is acceptable, and who makes that decision?

The more explainable an AI model is, the easier it is to understand, assess, and implement. But how should we weigh interpretability and transparency against performance? Should we opt for more transparent algorithms or processes, even if more opaque algorithms could save more lives or prevent more suffering? The questions above illustrate that ethical aspects need to be analysed and addressed in order to manage many of the risks that may arise with LLMs.

4.5 New technology has always had, and will always have, risks

It’s important to remember that all new technology, especially in healthcare, has risks. Many of our most important historical medical innovations, for example antibiotics and pacemakers, carried risks that had to be mitigated. These range from common but minor risks (for example, an antibiotic being ineffective against certain bacteria, or a blood clot) to rare but serious ones (lethal allergic reactions or device malfunctions). As always, the question is how to handle risks so that the net effect is positive.

LLM risks may feel more difficult to understand and scope, especially for non-technical clinicians. However, there are frameworks that can help identify, early on, the risks that need to be mitigated. One such framework is presented below.

Simple framework for identifying fundamental limitations in LLM applications in healthcare

1. Determine the main source of healthcare data that the LLM uses (patient, provider or payor)

2. Determine the intended recipient of the LLM’s output (patient, provider or payor)

3. Combine the answers from (1) and (2) to identify a category

4. Assess fundamental limitations for that category and whether suitable mitigations are in place
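The four steps above are simple enough to express as a small lookup. The sketch below is a minimal illustration, assuming that patient, provider and payor are the only actors and that the reader supplies their own list of fundamental limitations per category, plus the mitigations a single application has in place. The names `Actor`, `categorize` and `unmitigated`, and the example entries, are hypothetical and not part of the framework itself.

```python
from enum import Enum
from itertools import product


class Actor(Enum):
    """The three healthcare actors named in the framework."""
    PATIENT = "patient"
    PROVIDER = "provider"
    PAYOR = "payor"


def categorize(data_source: Actor, recipient: Actor) -> str:
    """Steps 1-3: combine the main data source and the output recipient into a category."""
    return f"{data_source.value}-to-{recipient.value}"


def unmitigated(data_source: Actor,
                recipient: Actor,
                limitations: dict[str, list[str]],
                mitigations: dict[str, str]) -> list[str]:
    """Step 4: list the category's known limitations that have no mitigation in place.

    `limitations` maps a category to its fundamental limitations, and
    `mitigations` maps a limitation to the mitigation this one application uses.
    Both are hypothetical, user-supplied inputs; the framework does not
    prescribe their contents.
    """
    category = categorize(data_source, recipient)
    return [lim for lim in limitations.get(category, []) if lim not in mitigations]


# Three data sources times three recipients gives nine possible categories.
ALL_CATEGORIES = [categorize(s, r) for s, r in product(Actor, Actor)]

if __name__ == "__main__":
    # Hypothetical example: an LLM drafting notes from provider data for providers.
    limitations = {"provider-to-provider": ["hallucination", "bias"]}
    mitigations = {"hallucination": "clinician reviews every draft before signing"}
    print(unmitigated(Actor.PROVIDER, Actor.PROVIDER, limitations, mitigations))  # ['bias']
```

The value lies less in the code than in being forced to write the limitations and mitigations down per category: anything the last step returns is a risk that still needs an owner.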

5. Recommendations

Now you know why I (and now also you!) spent so much time on this topic: LLMs have the potential to automate and significantly improve three core healthcare activities.

Considering the challenges that healthcare is facing and the rapid development we’ve already seen, I think that LLM applications will become more common and play a more important role in healthcare. However, adopting this technology will require a balancing act from providers as it affects core activities.

If you’re a healthcare system, I have some advice for you. To reap the benefits of LLMs, it will be important for healthcare systems to:

1. Do the math. It’s easy to write off LLMs as the latest hype. However, the time saved by automating the majority of documentation alone is so significant that it warrants serious interest. Calculating an estimated benefit can both clarify why LLMs are worth exploring and guide the implementation plan and the evaluation of the investment.

2. Digitalize healthcare processes so that LLMs can be applied in an integrated way. It will be challenging to reap benefits from LLM systems without supporting digital infrastructure. This entails shifting from paper records to EHRs, and ensuring a coherent digital infrastructure that facilitates communication and coordination with patients. Done correctly, digitalization can also free up time in its own right.

3. Increase knowledge about LLMs in order to identify and mitigate risks. Researchers and developers are moving ahead rapidly, but clinicians and clinical decision-makers need to have a fundamental understanding in order to guide the development of new knowledge and new LLM applications.

4. Develop in-house capabilities to guide LLM development and implementation. As software becomes an increasingly central part of healthcare provision, providers need to be able to control certain activities, and some technical knowledge is required to understand and manage some of the risks of LLMs. Some providers will need to develop new capabilities, for example in data science and user experience (UX), in order to navigate this.

5. Spend time and effort on change management. Changing any ways of working in healthcare is challenging. Comprehensive clinician education will be needed as the expected benefits from LLMs are contingent on clinicians using a new type of software.

6. Evaluate investments and spread learnings. LLM applications are highly contextual and continuously developing. That’s why real-life observational evaluations will often be as relevant as (or more relevant than) rigorous simulations for providers assessing the expected effect. The more providers share their real-life learnings from implementing LLMs, the more other providers can avoid repeating the same mistakes and instead reap the same successes.
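Recommendation 1 ("Do the math") can be sketched as a back-of-the-envelope calculation. The example below is a minimal illustration, assuming that the benefit is driven mainly by clinician time freed from documentation. The function names, parameters and every figure in the usage lines are hypothetical placeholders, not estimates from this essay, and should be replaced with local data.

```python
def annual_hours_saved(clinicians: int,
                       documentation_hours_per_week: float,
                       share_automated: float,
                       working_weeks_per_year: int) -> float:
    """Rough estimate of clinician hours freed per year by automating documentation."""
    if not 0.0 <= share_automated <= 1.0:
        raise ValueError("share_automated must be between 0 and 1")
    return clinicians * documentation_hours_per_week * share_automated * working_weeks_per_year


def net_annual_benefit(hours_saved: float,
                       value_per_hour: float,
                       annual_cost: float) -> float:
    """Value of the freed clinician time minus the yearly cost of the LLM application."""
    return hours_saved * value_per_hour - annual_cost


if __name__ == "__main__":
    # Placeholder inputs only; replace with local figures.
    hours = annual_hours_saved(clinicians=100,
                               documentation_hours_per_week=10,
                               share_automated=0.5,
                               working_weeks_per_year=44)
    print(hours)  # 22000.0
    print(net_annual_benefit(hours, value_per_hour=80.0, annual_cost=500_000.0))
```

Writing the estimate down this way makes each assumption explicit (how much documentation time there is, and how much of it can realistically be automated), so the same numbers can be revisited when the investment is evaluated.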

If you’re not a healthcare system, I have something for you as well. If you’ve read this far, I suspect that you also have a deep interest in the topic. Consequently, I suspect that you’ve also discussed it in various settings and might enjoy the following: a bingo sheet with the most common misconceptions I’ve encountered when discussing this topic. Print it out, bring it along, and hopefully it’ll make the next discussion on the topic more enjoyable 😄

Responses to misconceptions:

Nope. Current regulation is clear. The FDA has already authorized over 500 AI-enabled devices.

No. The cost of LLMs is decreasing rapidly, so cost is very unlikely to be an issue.

Niet. Tell that to all leading institutions’ radiology departments that have been using AI algorithms for years.

LLMs won’t replace beepers and faxes, but can help healthcare in other ways.

Heard of paracetamol (or acetaminophen)? Also, read #1.

Nope. On the contrary, there isn’t time to not implement systems that can reduce a lot of the time spent on admin.

Aaargh! 🤦 Read any book on medical history. Or look at radiology departments. As technology advances, clinicians task-shift and start managing more complex and nuanced activities.

Nope. Try getting an LLM to listen to your heart, break bad news or lead a team of healthcare professionals. Also, this:

Nope. Guidelines include the subjective assessment of data and context that needs to be qualitatively assessed. Please talk to clinicians, researchers or anyone involved with managing medical knowledge.

See Williams 1990, Lagos-Peñas 2020, or literally any other study looking at whether socioeconomic status affects health.

Equally reasonable to fawn over all the Quokka pictures which this weird clinic surely has in its lunch room.

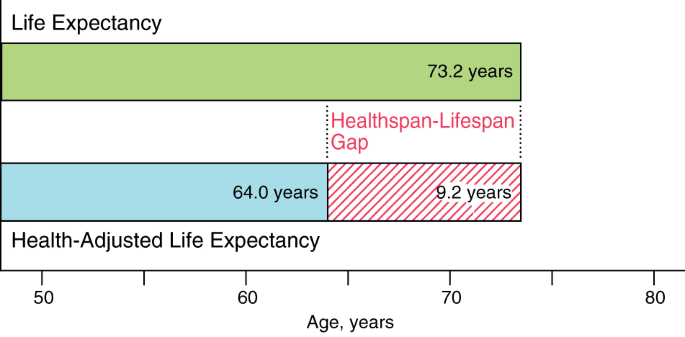

I’ve skipped an important concept here. There can be interventions that improve people’s healthspan, i.e. the number of years lived in full health, unencumbered by disease or injury. Such interventions, which decrease the healthspan-lifespan gap, do not increase the burden on healthcare systems.

In both examples the left participant is an AI chatbot and the right participant is a human. Tricky, right?